mirror of

https://github.com/sigp/lighthouse.git

synced 2026-05-07 00:42:42 +00:00

Web3Signer support for VC (#2522)

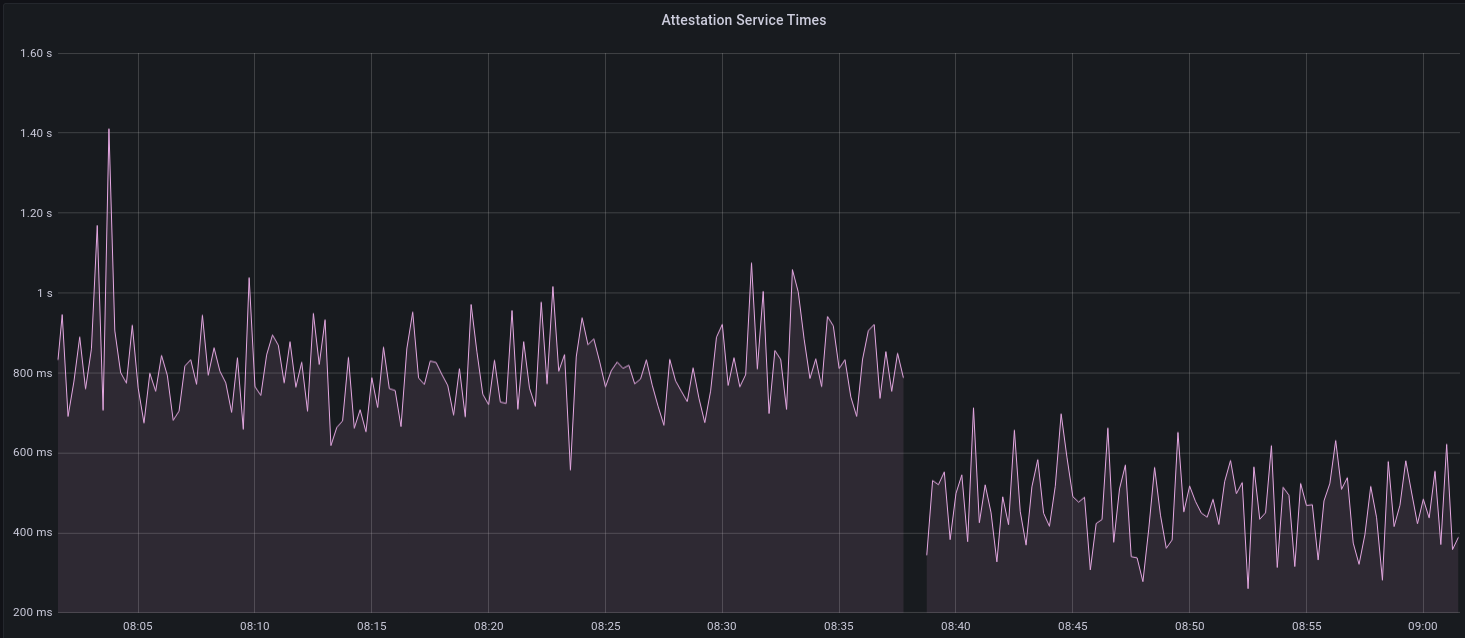

[EIP-3030]: https://eips.ethereum.org/EIPS/eip-3030
[Web3Signer]: https://consensys.github.io/web3signer/web3signer-eth2.html

## Issue Addressed

Resolves #2498

## Proposed Changes

Allows the VC to call out to a [Web3Signer] remote signer to obtain signatures.

## Additional Info

### Making Signing Functions `async`

To allow remote signing, I needed to make all the signing functions `async`. This caused a bit of noise where I had to convert iterators into `for` loops.

In `duties_service.rs` there was a particularly tricky case where we couldn't hold a write-lock across an `await`, so I had to first take a read-lock, then grab a write-lock.

### Move Signing from Core Executor

Whilst implementing this feature, I noticed that signing was happening on the core tokio executor. I suspect this was causing the executor to temporarily lock and occasionally trigger some HTTP timeouts (and potentially SQL pool timeouts, but I can't verify this). Since moving all signing into blocking tokio tasks, I noticed a distinct drop in the "attestations_http_get" metric on a Prater node (see the image above).

I think this graph indicates that freeing the core executor allows the VC to operate more smoothly.

### Refactor TaskExecutor

I noticed that the `TaskExecutor::spawn_blocking_handle` function would fail to spawn tasks if it were unable to obtain handles to some metrics (this can happen if the same metric is defined twice). It seemed that a more sensible approach would be to keep spawning tasks, but without metrics. To that end, I refactored the function so that it would still function without metrics. There are no other changes made.

## TODO

- [x] Restructure to support multiple signing methods.
- [x] Add calls to remote signer from VC.
- [x] Documentation
- [x] Test all endpoints
- [x] Test HTTPS certificate
- [x] Allow adding remote signer validators via the API
- [x] Add Altair support via [21.8.1-rc1](https://github.com/ConsenSys/web3signer/releases/tag/21.8.1-rc1)
- [x] Create issue to start using latest version of web3signer. (See #2570)

## Notes

- ~~Web3Signer doesn't yet support the Altair fork for Prater. See https://github.com/ConsenSys/web3signer/issues/423.~~
- ~~There is not yet a release of Web3Signer which supports Altair blocks. See https://github.com/ConsenSys/web3signer/issues/391.~~
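The `TaskExecutor` change described above (keep spawning even when a metrics handle cannot be obtained) can be illustrated with a minimal, standard-library-only sketch. Everything here is an assumption made for illustration: `TaskTimer`, `try_start_timer`, the `metrics_available` flag, and the use of `std::thread` in place of tokio's blocking pool are hypothetical and are not Lighthouse's actual implementation.

```rust
use std::thread::{self, JoinHandle};
use std::time::Instant;

/// Hypothetical stand-in for a metrics timer handle (not a Lighthouse type).
pub struct TaskTimer {
    started: Instant,
}

/// Hypothetical metric lookup. It returns `None` when the handle cannot be
/// obtained, for example because the same metric was defined twice.
pub fn try_start_timer(metrics_available: bool) -> Option<TaskTimer> {
    if metrics_available {
        Some(TaskTimer { started: Instant::now() })
    } else {
        None
    }
}

/// Spawns `task` on a separate thread whether or not metrics are available.
/// A missing timer only disables the measurement; it never prevents the spawn.
pub fn spawn_blocking_handle<F, R>(task: F, metrics_available: bool) -> JoinHandle<R>
where
    F: FnOnce() -> R + Send + 'static,
    R: Send + 'static,
{
    let timer = try_start_timer(metrics_available);
    thread::spawn(move || {
        let result = task();
        if let Some(timer) = timer {
            // A real implementation would record this duration in a histogram.
            let _elapsed = timer.started.elapsed();
        }
        result
    })
}
```

The design point is that the optional timer is resolved before spawning and is only consulted after the task has run, so a failed metric lookup cannot turn into a failed spawn.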


@@ -5,6 +5,7 @@ use crate::{
     validator_store::ValidatorStore,
 };
 use environment::RuntimeContext;
+use futures::future::join_all;
 use slog::{crit, error, info, trace};
 use slot_clock::SlotClock;
 use std::collections::HashMap;

@@ -288,7 +289,7 @@ impl<T: SlotClock + 'static, E: EthSpec> AttestationService<T, E> {
         // Then download, sign and publish a `SignedAggregateAndProof` for each
         // validator that is elected to aggregate for this `slot` and
         // `committee_index`.
-        self.produce_and_publish_aggregates(attestation_data, &validator_duties)
+        self.produce_and_publish_aggregates(&attestation_data, &validator_duties)
             .await
             .map_err(move |e| {
                 crit!(

@@ -350,10 +351,11 @@ impl<T: SlotClock + 'static, E: EthSpec> AttestationService<T, E> {
             .await
             .map_err(|e| e.to_string())?;
 
-        let mut attestations = Vec::with_capacity(validator_duties.len());
-
-        for duty_and_proof in validator_duties {
+        // Create futures to produce signed `Attestation` objects.
+        let attestation_data_ref = &attestation_data;
+        let signing_futures = validator_duties.iter().map(|duty_and_proof| async move {
             let duty = &duty_and_proof.duty;
+            let attestation_data = attestation_data_ref;
 
             // Ensure that the attestation matches the duties.
             #[allow(clippy::suspicious_operation_groupings)]

@@ -368,7 +370,7 @@ impl<T: SlotClock + 'static, E: EthSpec> AttestationService<T, E> {
                     "duty_index" => duty.committee_index,
                     "attestation_index" => attestation_data.index,
                 );
-                continue;
+                return None;
             }
 
             let mut attestation = Attestation {

@@ -377,26 +379,38 @@ impl<T: SlotClock + 'static, E: EthSpec> AttestationService<T, E> {
                 signature: AggregateSignature::infinity(),
             };
 
-            if let Err(e) = self.validator_store.sign_attestation(
-                duty.pubkey,
-                duty.validator_committee_index as usize,
-                &mut attestation,
-                current_epoch,
-            ) {
-                crit!(
-                    log,
-                    "Failed to sign attestation";
-                    "error" => ?e,
-                    "committee_index" => committee_index,
-                    "slot" => slot.as_u64(),
-                );
-                continue;
-            } else {
-                attestations.push(attestation);
+            match self
+                .validator_store
+                .sign_attestation(
+                    duty.pubkey,
+                    duty.validator_committee_index as usize,
+                    &mut attestation,
+                    current_epoch,
+                )
+                .await
+            {
+                Ok(()) => Some(attestation),
+                Err(e) => {
+                    crit!(
+                        log,
+                        "Failed to sign attestation";
+                        "error" => ?e,
+                        "committee_index" => committee_index,
+                        "slot" => slot.as_u64(),
+                    );
+                    None
+                }
             }
-        }
+        });
 
-        let attestations_slice = attestations.as_slice();
+        // Execute all the futures in parallel, collecting any successful results.
+        let attestations = &join_all(signing_futures)
+            .await
+            .into_iter()
+            .flatten()
+            .collect::<Vec<Attestation<E>>>();
+
+        // Post the attestations to the BN.
         match self
             .beacon_nodes
             .first_success(RequireSynced::No, |beacon_node| async move {

@@ -405,7 +419,7 @@ impl<T: SlotClock + 'static, E: EthSpec> AttestationService<T, E> {
                     &[metrics::ATTESTATIONS_HTTP_POST],
                 );
                 beacon_node
-                    .post_beacon_pool_attestations(attestations_slice)
+                    .post_beacon_pool_attestations(attestations)
                     .await
             })
             .await

@@ -447,13 +461,12 @@ impl<T: SlotClock + 'static, E: EthSpec> AttestationService<T, E> {
     /// returned to the BN.
     async fn produce_and_publish_aggregates(
         &self,
-        attestation_data: AttestationData,
+        attestation_data: &AttestationData,
         validator_duties: &[DutyAndProof],
     ) -> Result<(), String> {
         let log = self.context.log();
 
-        let attestation_data_ref = &attestation_data;
-        let aggregated_attestation = self
+        let aggregated_attestation = &self
             .beacon_nodes
             .first_success(RequireSynced::No, |beacon_node| async move {
                 let _timer = metrics::start_timer_vec(

@@ -462,55 +475,59 @@ impl<T: SlotClock + 'static, E: EthSpec> AttestationService<T, E> {
                 );
                 beacon_node
                     .get_validator_aggregate_attestation(
-                        attestation_data_ref.slot,
-                        attestation_data_ref.tree_hash_root(),
+                        attestation_data.slot,
+                        attestation_data.tree_hash_root(),
                     )
                     .await
                     .map_err(|e| format!("Failed to produce an aggregate attestation: {:?}", e))?
-                    .ok_or_else(|| format!("No aggregate available for {:?}", attestation_data_ref))
+                    .ok_or_else(|| format!("No aggregate available for {:?}", attestation_data))
                     .map(|result| result.data)
             })
             .await
             .map_err(|e| e.to_string())?;
 
-        let mut signed_aggregate_and_proofs = Vec::new();
-
-        for duty_and_proof in validator_duties {
+        // Create futures to produce the signed aggregated attestations.
+        let signing_futures = validator_duties.iter().map(|duty_and_proof| async move {
             let duty = &duty_and_proof.duty;
-
-            let selection_proof = if let Some(proof) = duty_and_proof.selection_proof.as_ref() {
-                proof
-            } else {
-                // Do not produce a signed aggregate for validators that are not
-                // subscribed aggregators.
-                continue;
-            };
+            let selection_proof = duty_and_proof.selection_proof.as_ref()?;
+
             let slot = attestation_data.slot;
             let committee_index = attestation_data.index;
 
             if duty.slot != slot || duty.committee_index != committee_index {
                 crit!(log, "Inconsistent validator duties during signing");
-                continue;
+                return None;
             }
 
-            match self.validator_store.produce_signed_aggregate_and_proof(
-                duty.pubkey,
-                duty.validator_index,
-                aggregated_attestation.clone(),
-                selection_proof.clone(),
-            ) {
-                Ok(aggregate) => signed_aggregate_and_proofs.push(aggregate),
+            match self
+                .validator_store
+                .produce_signed_aggregate_and_proof(
+                    duty.pubkey,
+                    duty.validator_index,
+                    aggregated_attestation.clone(),
+                    selection_proof.clone(),
+                )
+                .await
+            {
+                Ok(aggregate) => Some(aggregate),
                 Err(e) => {
                     crit!(
                         log,
                         "Failed to sign attestation";
-                        "error" => ?e
+                        "error" => ?e,
                         "pubkey" => ?duty.pubkey,
                     );
-                    continue;
+                    None
                 }
             }
-        }
+        });
 
+        // Execute all the futures in parallel, collecting any successful results.
+        let signed_aggregate_and_proofs = join_all(signing_futures)
+            .await
+            .into_iter()
+            .flatten()
+            .collect::<Vec<_>>();
+
         if !signed_aggregate_and_proofs.is_empty() {
             let signed_aggregate_and_proofs_slice = signed_aggregate_and_proofs.as_slice();
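The hunks above replace `for` loops that used `continue` and pushed into a `Vec` with closures that return `Option` and a final `flatten()`. The following is a simplified, synchronous sketch of that shape, written without `async` or `join_all` so that it needs only the standard library. The `Duty` struct and the `sign` function are hypothetical stand-ins, not Lighthouse types.

```rust
/// Hypothetical, simplified duty record (not the Lighthouse `DutyAndProof`).
#[derive(Debug, Clone, PartialEq)]
pub struct Duty {
    pub slot: u64,
    pub pubkey: u8,
}

/// Hypothetical signer that fails for the all-zero pubkey.
fn sign(duty: &Duty) -> Result<String, String> {
    if duty.pubkey == 0 {
        Err("unknown pubkey".to_string())
    } else {
        Ok(format!("sig-{}-{}", duty.slot, duty.pubkey))
    }
}

/// Each duty maps to `Some(signature)` or `None`. What used to be `continue`
/// inside a loop body becomes `return None` inside the closure, and the
/// failures are dropped afterwards with `flatten()`.
pub fn sign_all(duties: &[Duty], expected_slot: u64) -> Vec<String> {
    let results = duties.iter().map(|duty| {
        if duty.slot != expected_slot {
            // Inconsistent duty: skip it without aborting the whole batch.
            return None;
        }
        match sign(duty) {
            Ok(signature) => Some(signature),
            // A real implementation would log the error here.
            Err(_e) => None,
        }
    });
    results.flatten().collect()
}
```

In the diff itself the closure bodies are `async move` blocks and the results are awaited together with `futures::future::join_all` before `flatten()` is applied, so one failed signature does not prevent the others from being published.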

@@ -240,6 +240,7 @@ impl<T: SlotClock + 'static, E: EthSpec> BlockService<T, E> {
         let randao_reveal = self
             .validator_store
             .randao_reveal(validator_pubkey, slot.epoch(E::slots_per_epoch()))
+            .await
             .map_err(|e| format!("Unable to produce randao reveal signature: {:?}", e))?
             .into();
 

@@ -276,6 +277,7 @@ impl<T: SlotClock + 'static, E: EthSpec> BlockService<T, E> {
                 let signed_block = self_ref
                     .validator_store
                     .sign_block(*validator_pubkey_ref, block, current_slot)
+                    .await
                     .map_err(|e| format!("Unable to sign block: {:?}", e))?;
 
                 let _post_timer = metrics::start_timer_vec(

@@ -16,6 +16,7 @@ use crate::{
 };
 use environment::RuntimeContext;
 use eth2::types::{AttesterData, BeaconCommitteeSubscription, ProposerData, StateId, ValidatorId};
+use futures::future::join_all;
 use parking_lot::RwLock;
 use safe_arith::ArithError;
 use slog::{debug, error, info, warn, Logger};

@@ -64,13 +65,14 @@ pub struct DutyAndProof {
 
 impl DutyAndProof {
     /// Instantiate `Self`, computing the selection proof as well.
-    pub fn new<T: SlotClock + 'static, E: EthSpec>(
+    pub async fn new<T: SlotClock + 'static, E: EthSpec>(
         duty: AttesterData,
         validator_store: &ValidatorStore<T, E>,
         spec: &ChainSpec,
     ) -> Result<Self, Error> {
         let selection_proof = validator_store
             .produce_selection_proof(duty.pubkey, duty.slot)
+            .await
             .map_err(Error::FailedToProduceSelectionProof)?;
 
         let selection_proof = selection_proof

@@ -637,56 +639,77 @@ async fn poll_beacon_attesters_for_epoch<T: SlotClock + 'static, E: EthSpec>(
 
     let dependent_root = response.dependent_root;
 
-    let relevant_duties = response
-        .data
-        .into_iter()
-        .filter(|attester_duty| local_pubkeys.contains(&attester_duty.pubkey))
-        .collect::<Vec<_>>();
+    // Filter any duties that are not relevant or already known.
+    let new_duties = {
+        // Avoid holding the read-lock for any longer than required.
+        let attesters = duties_service.attesters.read();
+        response
+            .data
+            .into_iter()
+            .filter(|duty| local_pubkeys.contains(&duty.pubkey))
+            .filter(|duty| {
+                // Only update the duties if either is true:
+                //
+                // - There were no known duties for this epoch.
+                // - The dependent root has changed, signalling a re-org.
+                attesters.get(&duty.pubkey).map_or(true, |duties| {
+                    duties
+                        .get(&epoch)
+                        .map_or(true, |(prior, _)| *prior != dependent_root)
+                })
+            })
+            .collect::<Vec<_>>()
+    };
 
     debug!(
         log,
         "Downloaded attester duties";
         "dependent_root" => %dependent_root,
-        "num_relevant_duties" => relevant_duties.len(),
+        "num_new_duties" => new_duties.len(),
     );
 
+    // Produce the `DutyAndProof` messages in parallel.
+    let duty_and_proof_results = join_all(new_duties.into_iter().map(|duty| {
+        DutyAndProof::new(duty, &duties_service.validator_store, &duties_service.spec)
+    }))
+    .await;
+
+    // Update the duties service with the new `DutyAndProof` messages.
+    let mut attesters = duties_service.attesters.write();
     let mut already_warned = Some(());
-    let mut attesters_map = duties_service.attesters.write();
-    for duty in relevant_duties {
-        let attesters_map = attesters_map.entry(duty.pubkey).or_default();
+    for result in duty_and_proof_results {
+        let duty_and_proof = match result {
+            Ok(duty_and_proof) => duty_and_proof,
+            Err(e) => {
+                error!(
+                    log,
+                    "Failed to produce duty and proof";
+                    "error" => ?e,
+                    "msg" => "may impair attestation duties"
+                );
+                // Do not abort the entire batch for a single failure.
+                continue;
+            }
+        };
 
-        // Only update the duties if either is true:
-        //
-        // - There were no known duties for this epoch.
-        // - The dependent root has changed, signalling a re-org.
-        if attesters_map
-            .get(&epoch)
-            .map_or(true, |(prior, _)| *prior != dependent_root)
+        let attester_map = attesters.entry(duty_and_proof.duty.pubkey).or_default();
+
+        if let Some((prior_dependent_root, _)) =
+            attester_map.insert(epoch, (dependent_root, duty_and_proof))
         {
-            let duty_and_proof =
-                DutyAndProof::new(duty, &duties_service.validator_store, &duties_service.spec)?;
-
-            if let Some((prior_dependent_root, _)) =
-                attesters_map.insert(epoch, (dependent_root, duty_and_proof))
-            {
-                // Using `already_warned` avoids excessive logs.
-                if dependent_root != prior_dependent_root && already_warned.take().is_some() {
-                    warn!(
-                        log,
-                        "Attester duties re-org";
-                        "prior_dependent_root" => %prior_dependent_root,
-                        "dependent_root" => %dependent_root,
-                        "msg" => "this may happen from time to time"
-                    )
-                }
+            // Using `already_warned` avoids excessive logs.
+            if dependent_root != prior_dependent_root && already_warned.take().is_some() {
+                warn!(
+                    log,
+                    "Attester duties re-org";
+                    "prior_dependent_root" => %prior_dependent_root,
+                    "dependent_root" => %dependent_root,
+                    "msg" => "this may happen from time to time"
+                )
             }
         }
     }
-    // Drop the write-lock.
-    //
-    // This is strictly unnecessary since the function ends immediately afterwards, but we remain
-    // defensive regardless.
-    drop(attesters_map);
+    drop(attesters);
 
     Ok(())
 }
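The hunk above is the `duties_service.rs` case mentioned in the commit message: the write-lock can no longer be held while selection proofs are produced, because producing them now involves an `await`. A minimal synchronous sketch of the resulting three-phase pattern follows. It uses `std::sync::RwLock` rather than `parking_lot`, and `DutiesCache`, `Root`, and `slow_proof` are hypothetical names introduced only for this example.

```rust
use std::collections::HashMap;
use std::sync::RwLock;

/// Hypothetical stand-in for a dependent root.
pub type Root = u64;

/// Hypothetical cache keyed by a one-byte "pubkey".
pub struct DutiesCache {
    attesters: RwLock<HashMap<u8, (Root, String)>>,
}

/// Stand-in for the slow (possibly remote) signing step.
fn slow_proof(pubkey: u8) -> String {
    format!("proof-{}", pubkey)
}

impl DutiesCache {
    pub fn new() -> Self {
        DutiesCache { attesters: RwLock::new(HashMap::new()) }
    }

    /// Returns how many entries were (re)computed.
    pub fn update(&self, pubkeys: &[u8], dependent_root: Root) -> usize {
        // Phase 1: decide what is new under a short-lived read-lock.
        let new_keys: Vec<u8> = {
            let attesters = self.attesters.read().unwrap();
            pubkeys
                .iter()
                .copied()
                .filter(|pk| {
                    attesters
                        .get(pk)
                        .map_or(true, |(prior, _)| *prior != dependent_root)
                })
                .collect()
        }; // The read guard is dropped here.

        // Phase 2: do the slow work while holding no lock at all.
        let proofs: Vec<(u8, String)> = new_keys
            .into_iter()
            .map(|pk| (pk, slow_proof(pk)))
            .collect();
        let count = proofs.len();

        // Phase 3: take the write-lock only to store the finished results.
        let mut attesters = self.attesters.write().unwrap();
        for (pk, proof) in proofs {
            attesters.insert(pk, (dependent_root, proof));
        }
        count
    }
}
```

One consequence of this shape is that the state can change between the read phase and the write phase; in this sketch a concurrent caller could at worst recompute and overwrite the same entry.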

@@ -2,6 +2,7 @@ use crate::{
     doppelganger_service::DoppelgangerStatus,
     duties_service::{DutiesService, Error},
 };
+use futures::future::join_all;
 use itertools::Itertools;
 use parking_lot::{MappedRwLockReadGuard, RwLock, RwLockReadGuard, RwLockWriteGuard};
 use slog::{crit, debug, info, warn};

@@ -330,8 +331,8 @@ pub async fn poll_sync_committee_duties<T: SlotClock + 'static, E: EthSpec>(
 
     if !new_pre_compute_duties.is_empty() {
         let sub_duties_service = duties_service.clone();
-        duties_service.context.executor.spawn_blocking(
-            move || {
+        duties_service.context.executor.spawn(
+            async move {
                 fill_in_aggregation_proofs(
                     sub_duties_service,
                     &new_pre_compute_duties,

@@ -339,6 +340,7 @@ pub async fn poll_sync_committee_duties<T: SlotClock + 'static, E: EthSpec>(
                     current_epoch,
                     current_pre_compute_epoch,
                 )
+                .await
             },
             "duties_service_sync_selection_proofs",
         );

@@ -370,8 +372,8 @@ pub async fn poll_sync_committee_duties<T: SlotClock + 'static, E: EthSpec>(
 
         if !new_pre_compute_duties.is_empty() {
             let sub_duties_service = duties_service.clone();
-            duties_service.context.executor.spawn_blocking(
-                move || {
+            duties_service.context.executor.spawn(
+                async move {
                     fill_in_aggregation_proofs(
                         sub_duties_service,
                         &new_pre_compute_duties,

@@ -379,6 +381,7 @@ pub async fn poll_sync_committee_duties<T: SlotClock + 'static, E: EthSpec>(
                         current_epoch,
                         pre_compute_epoch,
                     )
+                    .await
                 },
                 "duties_service_sync_selection_proofs",
             );

@@ -468,7 +471,7 @@ pub async fn poll_sync_committee_duties_for_period<T: SlotClock + 'static, E: Et
     Ok(())
 }
 
-pub fn fill_in_aggregation_proofs<T: SlotClock + 'static, E: EthSpec>(
+pub async fn fill_in_aggregation_proofs<T: SlotClock + 'static, E: EthSpec>(
     duties_service: Arc<DutiesService<T, E>>,
     pre_compute_duties: &[(Epoch, SyncDuty)],
     sync_committee_period: u64,

@@ -487,60 +490,54 @@ pub fn fill_in_aggregation_proofs<T: SlotClock + 'static, E: EthSpec>(
 
     // Generate selection proofs for each validator at each slot, one epoch at a time.
     for epoch in (current_epoch.as_u64()..=pre_compute_epoch.as_u64()).map(Epoch::new) {
-        // Generate proofs.
-        let validator_proofs: Vec<(u64, Vec<_>)> = pre_compute_duties
-            .iter()
-            .filter_map(|(validator_start_epoch, duty)| {
-                // Proofs are already known at this epoch for this validator.
-                if epoch < *validator_start_epoch {
-                    return None;
+        let mut validator_proofs = vec![];
+        for (validator_start_epoch, duty) in pre_compute_duties {
+            // Proofs are already known at this epoch for this validator.
+            if epoch < *validator_start_epoch {
+                continue;
             }
+
+            let subnet_ids = match duty.subnet_ids::<E>() {
+                Ok(subnet_ids) => subnet_ids,
+                Err(e) => {
+                    crit!(
+                        log,
+                        "Arithmetic error computing subnet IDs";
+                        "error" => ?e,
+                    );
+                    continue;
+                }
+            };
 
-                let subnet_ids = duty
-                    .subnet_ids::<E>()
-                    .map_err(|e| {
-                        crit!(
-                            log,
-                            "Arithmetic error computing subnet IDs";
-                            "error" => ?e,
-                        );
-                    })
-                    .ok()?;
+            // Create futures to produce proofs.
+            let duties_service_ref = &duties_service;
+            let futures = epoch
+                .slot_iter(E::slots_per_epoch())
+                .cartesian_product(&subnet_ids)
+                .map(|(duty_slot, subnet_id)| async move {
+                    // Construct proof for prior slot.
+                    let slot = duty_slot - 1;
 
-                let proofs = epoch
-                    .slot_iter(E::slots_per_epoch())
-                    .cartesian_product(&subnet_ids)
-                    .filter_map(|(duty_slot, &subnet_id)| {
-                        // Construct proof for prior slot.
-                        let slot = duty_slot - 1;
+                    let proof = match duties_service_ref
+                        .validator_store
+                        .produce_sync_selection_proof(&duty.pubkey, slot, *subnet_id)
+                        .await
+                    {
+                        Ok(proof) => proof,
+                        Err(e) => {
+                            warn!(
+                                log,
+                                "Unable to sign selection proof";
+                                "error" => ?e,
+                                "pubkey" => ?duty.pubkey,
+                                "slot" => slot,
+                            );
+                            return None;
+                        }
+                    };
 
-                        let proof = duties_service
-                            .validator_store
-                            .produce_sync_selection_proof(&duty.pubkey, slot, subnet_id)
-                            .map_err(|_| {
-                                warn!(
-                                    log,
-                                    "Pubkey missing when signing selection proof";
-                                    "pubkey" => ?duty.pubkey,
-                                    "slot" => slot,
-                                );
-                            })
-                            .ok()?;
-
-                        let is_aggregator = proof
-                            .is_aggregator::<E>()
-                            .map_err(|e| {
-                                warn!(
-                                    log,
-                                    "Error determining is_aggregator";
-                                    "pubkey" => ?duty.pubkey,
-                                    "slot" => slot,
-                                    "error" => ?e,
-                                );
-                            })
-                            .ok()?;
-
-                        if is_aggregator {
+                    match proof.is_aggregator::<E>() {
+                        Ok(true) => {
                             debug!(
                                 log,
                                 "Validator is sync aggregator";

@@ -548,16 +545,31 @@ pub fn fill_in_aggregation_proofs<T: SlotClock + 'static, E: EthSpec>(
                                 "slot" => slot,
                                 "subnet_id" => %subnet_id,
                             );
-                            Some(((slot, subnet_id), proof))
-                        } else {
+                            Some(((slot, *subnet_id), proof))
+                        }
+                        Ok(false) => None,
+                        Err(e) => {
+                            warn!(
+                                log,
+                                "Error determining is_aggregator";
+                                "pubkey" => ?duty.pubkey,
+                                "slot" => slot,
+                                "error" => ?e,
+                            );
                             None
                         }
-                    })
-                    .collect();
+                    }
+                });
 
-                Some((duty.validator_index, proofs))
-            })
-            .collect();
+            // Execute all the futures in parallel, collecting any successful results.
+            let proofs = join_all(futures)
+                .await
+                .into_iter()
+                .flatten()
+                .collect::<Vec<_>>();
+
+            validator_proofs.push((duty.validator_index, proofs));
+        }
 
         // Add to global storage (we add regularly so the proofs can be used ASAP).
         let sync_map = duties_service.sync_duties.committees.read();

@@ -1,4 +1,5 @@
 use crate::ValidatorStore;
+use account_utils::validator_definitions::{SigningDefinition, ValidatorDefinition};
 use account_utils::{
     eth2_wallet::{bip39::Mnemonic, WalletBuilder},
     random_mnemonic, random_password, ZeroizeString,

@@ -21,7 +22,7 @@ use validator_dir::Builder as ValidatorDirBuilder;
 ///
 /// If `key_derivation_path_offset` is supplied then the EIP-2334 validator index will start at
 /// this point.
-pub async fn create_validators<P: AsRef<Path>, T: 'static + SlotClock, E: EthSpec>(
+pub async fn create_validators_mnemonic<P: AsRef<Path>, T: 'static + SlotClock, E: EthSpec>(
     mnemonic_opt: Option<Mnemonic>,
     key_derivation_path_offset: Option<u32>,
     validator_requests: &[api_types::ValidatorRequest],

@@ -159,3 +160,33 @@ pub async fn create_validators<P: AsRef<Path>, T: 'static + SlotClock, E: EthSpe
 
     Ok((validators, mnemonic))
 }
+
+pub async fn create_validators_web3signer<T: 'static + SlotClock, E: EthSpec>(
+    validator_requests: &[api_types::Web3SignerValidatorRequest],
+    validator_store: &ValidatorStore<T, E>,
+) -> Result<(), warp::Rejection> {
+    for request in validator_requests {
+        let validator_definition = ValidatorDefinition {
+            enabled: request.enable,
+            voting_public_key: request.voting_public_key.clone(),
+            graffiti: request.graffiti.clone(),
+            description: request.description.clone(),
+            signing_definition: SigningDefinition::Web3Signer {
+                url: request.url.clone(),
+                root_certificate_path: request.root_certificate_path.clone(),
+                request_timeout_ms: request.request_timeout_ms,
+            },
+        };
+        validator_store
+            .add_validator(validator_definition)
+            .await
+            .map_err(|e| {
+                warp_utils::reject::custom_server_error(format!(
+                    "failed to initialize validator: {:?}",
+                    e
+                ))
+            })?;
+    }
+
+    Ok(())
+}
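The `SigningDefinition::Web3Signer` variant added above is one of several signing methods, and the signing call has to be `async` because the remote variant waits on a network round trip. The sketch below shows that dispatch in a self-contained form. It is an illustration only: the `SigningMethod` enum here, its `secret` field, the XOR and byte-reversal "signatures", and the tiny `block_on` helper are all hypothetical and are not how Lighthouse or Web3Signer actually sign.

```rust
use std::future::Future;
use std::sync::Arc;
use std::task::{Context, Poll, Wake, Waker};

/// Hypothetical, simplified signing methods (not the Lighthouse enum).
pub enum SigningMethod {
    LocalKeystore { secret: u8 },
    Web3Signer { url: String },
}

/// Signing is `async` so that the remote variant can await a response.
pub async fn sign(method: &SigningMethod, message: &[u8]) -> Result<Vec<u8>, String> {
    match method {
        // Toy "signature": XOR every byte with the secret.
        SigningMethod::LocalKeystore { secret } => {
            Ok(message.iter().map(|b| b ^ secret).collect())
        }
        SigningMethod::Web3Signer { url } => remote_sign(url, message).await,
    }
}

/// Stand-in for an HTTP call to a remote signer; it only reverses the bytes.
async fn remote_sign(url: &str, message: &[u8]) -> Result<Vec<u8>, String> {
    if url.is_empty() {
        return Err("missing signer URL".to_string());
    }
    Ok(message.iter().rev().copied().collect())
}

/// Waker that does nothing; sufficient for futures that never wait on I/O.
struct NoopWaker;

impl Wake for NoopWaker {
    fn wake(self: Arc<Self>) {}
}

/// Minimal executor used only to drive the sketch without an async runtime.
pub fn block_on<F: Future>(future: F) -> F::Output {
    let mut future = Box::pin(future);
    let waker = Waker::from(Arc::new(NoopWaker));
    let mut cx = Context::from_waker(&waker);
    loop {
        if let Poll::Ready(output) = future.as_mut().poll(&mut cx) {
            return output;
        }
        std::thread::yield_now();
    }
}
```

Callers do not need to know which variant they hold: both paths are awaited the same way, which is why every caller of a signing function in this commit gained an `.await`.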

@@ -4,7 +4,7 @@ mod tests;
 
 use crate::ValidatorStore;
 use account_utils::mnemonic_from_phrase;
-use create_validator::create_validators;
+use create_validator::{create_validators_mnemonic, create_validators_web3signer};
 use eth2::lighthouse_vc::types::{self as api_types, PublicKey, PublicKeyBytes};
 use lighthouse_version::version_with_platform;
 use serde::{Deserialize, Serialize};

@@ -273,14 +273,15 @@ pub fn serve<T: 'static + SlotClock + Clone, E: EthSpec>(
              runtime: Weak<Runtime>| {
                 blocking_signed_json_task(signer, move || {
                     if let Some(runtime) = runtime.upgrade() {
-                        let (validators, mnemonic) = runtime.block_on(create_validators(
-                            None,
-                            None,
-                            &body,
-                            &validator_dir,
-                            &validator_store,
-                            &spec,
-                        ))?;
+                        let (validators, mnemonic) =
+                            runtime.block_on(create_validators_mnemonic(
+                                None,
+                                None,
+                                &body,
+                                &validator_dir,
+                                &validator_store,
+                                &spec,
+                            ))?;
                         let response = api_types::PostValidatorsResponseData {
                             mnemonic: mnemonic.into_phrase().into(),
                             validators,

@@ -322,14 +323,15 @@ pub fn serve<T: 'static + SlotClock + Clone, E: EthSpec>(
                                 e
                             ))
                         })?;
-                        let (validators, _mnemonic) = runtime.block_on(create_validators(
-                            Some(mnemonic),
-                            Some(body.key_derivation_path_offset),
-                            &body.validators,
-                            &validator_dir,
-                            &validator_store,
-                            &spec,
-                        ))?;
+                        let (validators, _mnemonic) =
+                            runtime.block_on(create_validators_mnemonic(
+                                Some(mnemonic),
+                                Some(body.key_derivation_path_offset),
+                                &body.validators,
+                                &validator_dir,
+                                &validator_store,
+                                &spec,
+                            ))?;
                         Ok(api_types::GenericResponse::from(validators))
                     } else {
                         Err(warp_utils::reject::custom_server_error(

@@ -416,6 +418,33 @@ pub fn serve<T: 'static + SlotClock + Clone, E: EthSpec>(
             },
         );
 
+    // POST lighthouse/validators/web3signer
+    let post_validators_web3signer = warp::path("lighthouse")
+        .and(warp::path("validators"))
+        .and(warp::path("web3signer"))
+        .and(warp::path::end())
+        .and(warp::body::json())
+        .and(validator_store_filter.clone())
+        .and(signer.clone())
+        .and(runtime_filter.clone())
+        .and_then(
+            |body: Vec<api_types::Web3SignerValidatorRequest>,
+             validator_store: Arc<ValidatorStore<T, E>>,
+             signer,
+             runtime: Weak<Runtime>| {
+                blocking_signed_json_task(signer, move || {
+                    if let Some(runtime) = runtime.upgrade() {
+                        runtime.block_on(create_validators_web3signer(&body, &validator_store))?;
+                        Ok(())
+                    } else {
+                        Err(warp_utils::reject::custom_server_error(
+                            "Runtime shutdown".into(),
+                        ))
+                    }
+                })
+            },
+        );
+
     // PATCH lighthouse/validators/{validator_pubkey}
     let patch_validators = warp::path("lighthouse")
         .and(warp::path("validators"))

@@ -484,7 +513,8 @@ pub fn serve<T: 'static + SlotClock + Clone, E: EthSpec>(
             .or(warp::post().and(
                 post_validators
                     .or(post_validators_keystore)
-                    .or(post_validators_mnemonic),
+                    .or(post_validators_mnemonic)
+                    .or(post_validators_web3signer),
             ))
             .or(warp::patch().and(patch_validators)),
         )

@@ -4,7 +4,8 @@
 use crate::doppelganger_service::DoppelgangerService;
 use crate::{
     http_api::{ApiSecret, Config as HttpConfig, Context},
-    Config, InitializedValidators, ValidatorDefinitions, ValidatorStore,
+    initialized_validators::InitializedValidators,
+    Config, ValidatorDefinitions, ValidatorStore,
 };
 use account_utils::{
     eth2_wallet::WalletBuilder, mnemonic_from_phrase, random_mnemonic, random_password,

@@ -27,6 +28,7 @@ use std::marker::PhantomData;
 use std::net::Ipv4Addr;
 use std::sync::Arc;
 use std::time::Duration;
+use task_executor::TaskExecutor;
 use tempfile::{tempdir, TempDir};
 use tokio::runtime::Runtime;
 use tokio::sync::oneshot;

@@ -41,6 +43,7 @@ struct ApiTester {
     url: SensitiveUrl,
     _server_shutdown: oneshot::Sender<()>,
     _validator_dir: TempDir,
+    _runtime_shutdown: exit_future::Signal,
 }
 
 // Builds a runtime to be used in the testing configuration.

@@ -85,6 +88,10 @@ impl ApiTester {
         let slot_clock =
             TestingSlotClock::new(Slot::new(0), Duration::from_secs(0), Duration::from_secs(1));
 
+        let (runtime_shutdown, exit) = exit_future::signal();
+        let (shutdown_tx, _) = futures::channel::mpsc::channel(1);
+        let executor = TaskExecutor::new(runtime.clone(), exit, log.clone(), shutdown_tx);
+
         let validator_store = ValidatorStore::<_, E>::new(
             initialized_validators,
             slashing_protection,

@@ -92,6 +99,7 @@ impl ApiTester {
             spec,
             Some(Arc::new(DoppelgangerService::new(log.clone()))),
             slot_clock,
+            executor,
             log.clone(),
         );
 

@@ -141,6 +149,7 @@ impl ApiTester {
             client,
             url,
             _server_shutdown: shutdown_tx,
+            _runtime_shutdown: runtime_shutdown,
         }
     }
 

@@ -425,6 +434,40 @@ impl ApiTester {

|

||||

self

|

||||

}

|

||||

|

||||

pub async fn create_web3signer_validators(self, s: Web3SignerValidatorScenario) -> Self {

|

||||

let initial_vals = self.vals_total();

|

||||

let initial_enabled_vals = self.vals_enabled();

|

||||

|

||||

let request: Vec<_> = (0..s.count)

|

||||

.map(|i| {

|

||||

let kp = Keypair::random();

|

||||

Web3SignerValidatorRequest {

|

||||

enable: s.enabled,

|

||||

description: format!("{}", i),

|

||||

graffiti: None,

|

||||

voting_public_key: kp.pk,

|

||||

url: format!("http://signer_{}.com/", i),

|

||||

root_certificate_path: None,

|

||||

request_timeout_ms: None,

|

||||

}

|

||||

})

|

||||

.collect();

|

||||

|

||||

self.client

|

||||

.post_lighthouse_validators_web3signer(&request)

|

||||

.await

|

||||

.unwrap_err();

|

||||

|

||||

assert_eq!(self.vals_total(), initial_vals + s.count);

|

||||

if s.enabled {

|

||||

assert_eq!(self.vals_enabled(), initial_enabled_vals + s.count);

|

||||

} else {

|

||||

assert_eq!(self.vals_enabled(), initial_enabled_vals);

|

||||

};

|

||||

|

||||

self

|

||||

}

|

||||

|

||||

pub async fn set_validator_enabled(self, index: usize, enabled: bool) -> Self {

|

||||

let validator = &self.client.get_lighthouse_validators().await.unwrap().data[index];

@@ -480,6 +523,11 @@ struct KeystoreValidatorScenario {
    correct_password: bool,
}

struct Web3SignerValidatorScenario {
    count: usize,
    enabled: bool,
}

#[test]
fn invalid_pubkey() {
    let runtime = build_runtime();

@@ -677,3 +725,22 @@ fn keystore_validator_creation() {
            .assert_validators_count(2);
    });
}

#[test]
fn web3signer_validator_creation() {
    let runtime = build_runtime();
    let weak_runtime = Arc::downgrade(&runtime);
    runtime.block_on(async {
        ApiTester::new(weak_runtime)
            .await
            .assert_enabled_validators_count(0)
            .assert_validators_count(0)
            .create_web3signer_validators(Web3SignerValidatorScenario {
                count: 1,
                enabled: true,
            })
            .await
            .assert_enabled_validators_count(1)
            .assert_validators_count(1);
    });
}

@@ -30,6 +30,8 @@ pub const VALIDATOR_ID_HTTP_GET: &str = "validator_id_http_get";
pub const SUBSCRIPTIONS_HTTP_POST: &str = "subscriptions_http_post";
pub const UPDATE_PROPOSERS: &str = "update_proposers";
pub const SUBSCRIPTIONS: &str = "subscriptions";
pub const LOCAL_KEYSTORE: &str = "local_keystore";
pub const WEB3SIGNER: &str = "web3signer";

pub use lighthouse_metrics::*;

@@ -138,6 +140,14 @@ lazy_static::lazy_static! {
        "sync_eth2_fallback_connected",
        "Set to 1 if connected to atleast one synced eth2 fallback node, otherwise set to 0",
    );
    /*
     * Signing Metrics
     */
    pub static ref SIGNING_TIMES: Result<HistogramVec> = try_create_histogram_vec(
        "vc_signing_times_seconds",
        "Duration to obtain a signature",
        &["type"]
    );
}

pub fn gather_prometheus_metrics<T: EthSpec>(
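
The `SIGNING_TIMES` histogram in this hunk is consumed in `signing_method.rs` via `let _timer = metrics::start_timer_vec(...)`. As far as I understand it, the timer records its observation when the guard is dropped, which is why the binding is `_timer` and not a bare `_` (a bare `_` would drop the guard immediately). A minimal std-only sketch of that drop-guard pattern is below. `Observations`, `TimerGuard` and `timed` are hypothetical names used for illustration only; they are not part of `lighthouse_metrics`.

```rust
use std::cell::RefCell;
use std::time::{Duration, Instant};

/// Hypothetical stand-in for a labelled histogram: one sample per finished timer.
#[derive(Default)]
pub struct Observations {
    samples: RefCell<Vec<(&'static str, Duration)>>,
}

impl Observations {
    /// Starts a timer; the elapsed time is recorded when the returned guard is dropped.
    pub fn start_timer(&self, label: &'static str) -> TimerGuard<'_> {
        TimerGuard {
            sink: self,
            label,
            started: Instant::now(),
        }
    }

    /// Returns the labels observed so far, in completion order.
    pub fn labels(&self) -> Vec<&'static str> {
        self.samples.borrow().iter().map(|(l, _)| *l).collect()
    }
}

/// Guard that pushes one sample into its `Observations` on drop.
pub struct TimerGuard<'a> {
    sink: &'a Observations,
    label: &'static str,
    started: Instant,
}

impl Drop for TimerGuard<'_> {
    fn drop(&mut self) {
        self.sink
            .samples
            .borrow_mut()
            .push((self.label, self.started.elapsed()));
    }
}

/// Times `work` under `label`. The guard lives until this function returns.
pub fn timed<R>(obs: &Observations, label: &'static str, work: impl FnOnce() -> R) -> R {
    let _timer = obs.start_timer(label);
    work()
}
```

With this shape, the measurement covers everything between the `start_timer` call and the end of the enclosing scope, including early returns via `?`.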

@@ -6,6 +6,7 @@
//! The `InitializedValidators` struct in this file serves as the source-of-truth of which
//! validators are managed by this validator client.

use crate::signing_method::SigningMethod;
use account_utils::{
    read_password, read_password_from_user,
    validator_definitions::{

@@ -16,16 +17,26 @@ use account_utils::{
use eth2_keystore::Keystore;
use lighthouse_metrics::set_gauge;
use lockfile::{Lockfile, LockfileError};
use reqwest::{Certificate, Client, Error as ReqwestError};
use slog::{debug, error, info, warn, Logger};
use std::collections::{HashMap, HashSet};
use std::fs::File;
use std::io;
use std::io::{self, Read};
use std::path::{Path, PathBuf};
use std::sync::Arc;
use std::time::Duration;
use types::{Graffiti, Keypair, PublicKey, PublicKeyBytes};
use url::{ParseError, Url};

use crate::key_cache;
use crate::key_cache::KeyCache;

/// Default timeout for a request to a remote signer for a signature.
///
/// Set to 12 seconds since that's the duration of a slot. A remote signer that cannot sign within
/// that time is outside the synchronous assumptions of Eth2.
const DEFAULT_REMOTE_SIGNER_REQUEST_TIMEOUT: Duration = Duration::from_secs(12);

// Use TTY instead of stdin to capture passwords from users.
const USE_STDIN: bool = false;
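
The way `DEFAULT_REMOTE_SIGNER_REQUEST_TIMEOUT` acts as a fallback can be sketched in isolation. This mirrors the `map(Duration::from_millis).unwrap_or(...)` chain that this diff applies to `request_timeout_ms` when a `Web3Signer` definition is initialized. `resolve_request_timeout` is a hypothetical helper name, not a function introduced by this PR.

```rust
use std::time::Duration;

/// Mirrors the 12-second default from the diff (one slot).
const DEFAULT_REMOTE_SIGNER_REQUEST_TIMEOUT: Duration = Duration::from_secs(12);

/// Hypothetical helper: resolves the optional per-validator timeout, given in milliseconds.
pub fn resolve_request_timeout(request_timeout_ms: Option<u64>) -> Duration {
    request_timeout_ms
        .map(Duration::from_millis)
        .unwrap_or(DEFAULT_REMOTE_SIGNER_REQUEST_TIMEOUT)
}
```

Note that in this sketch a configured value of `0` is passed through unchanged; it is not treated as "use the default".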

@@ -66,6 +77,12 @@ pub enum Error {
    ValidatorNotInitialized(PublicKey),
    /// Unable to read the slot clock.
    SlotClock,
    /// The URL for the remote signer cannot be parsed.
    InvalidWeb3SignerUrl(String),
    /// Unable to read the root certificate file for the remote signer.
    InvalidWeb3SignerRootCertificateFile(io::Error),
    InvalidWeb3SignerRootCertificate(ReqwestError),
    UnableToBuildWeb3SignerClient(ReqwestError),
}

impl From<LockfileError> for Error {

@@ -74,23 +91,9 @@ impl From<LockfileError> for Error {
    }
}

/// A method used by a validator to sign messages.
///
/// Presently there is only a single variant, however we expect more variants to arise (e.g.,
/// remote signing).
pub enum SigningMethod {
    /// A validator that is defined by an EIP-2335 keystore on the local filesystem.
    LocalKeystore {
        voting_keystore_path: PathBuf,
        voting_keystore_lockfile: Lockfile,
        voting_keystore: Keystore,
        voting_keypair: Keypair,
    },
}

/// A validator that is ready to sign messages.
pub struct InitializedValidator {
    signing_method: SigningMethod,
    signing_method: Arc<SigningMethod>,
    graffiti: Option<Graffiti>,
    /// The validators index in `state.validators`, to be updated by an external service.
    index: Option<u64>,

@@ -99,11 +102,13 @@ pub struct InitializedValidator {
impl InitializedValidator {
    /// Return a reference to this validator's lockfile if it has one.
    pub fn keystore_lockfile(&self) -> Option<&Lockfile> {
        match self.signing_method {
        match self.signing_method.as_ref() {
            SigningMethod::LocalKeystore {
                ref voting_keystore_lockfile,
                ..
            } => Some(voting_keystore_lockfile),
            // Web3Signer validators do not have any lockfiles.
            SigningMethod::Web3Signer { .. } => None,
        }
    }
}

@@ -138,7 +143,7 @@ impl InitializedValidator {
            return Err(Error::UnableToInitializeDisabledValidator);
        }

        match def.signing_definition {
        let signing_method = match def.signing_definition {
            // Load the keystore, password, decrypt the keypair and create a lockfile for a
            // EIP-2335 keystore on the local filesystem.
            SigningDefinition::LocalKeystore {

@@ -210,33 +215,77 @@ impl InitializedValidator {

                let voting_keystore_lockfile = Lockfile::new(lockfile_path)?;

                Ok(Self {
                    signing_method: SigningMethod::LocalKeystore {
                        voting_keystore_path,
                        voting_keystore_lockfile,
                        voting_keystore: voting_keystore.clone(),
                        voting_keypair,
                    },
                    graffiti: def.graffiti.map(Into::into),
                    index: None,
                })
                SigningMethod::LocalKeystore {
                    voting_keystore_path,
                    voting_keystore_lockfile,
                    voting_keystore: voting_keystore.clone(),
                    voting_keypair: Arc::new(voting_keypair),
                }
            }
        }
            SigningDefinition::Web3Signer {
                url,
                root_certificate_path,
                request_timeout_ms,
            } => {
                let signing_url = build_web3_signer_url(&url, &def.voting_public_key)
                    .map_err(|e| Error::InvalidWeb3SignerUrl(e.to_string()))?;
                let request_timeout = request_timeout_ms
                    .map(Duration::from_millis)
                    .unwrap_or(DEFAULT_REMOTE_SIGNER_REQUEST_TIMEOUT);

                let builder = Client::builder().timeout(request_timeout);

                let builder = if let Some(path) = root_certificate_path {
                    let certificate = load_pem_certificate(path)?;
                    builder.add_root_certificate(certificate)
                } else {
                    builder
                };

                let http_client = builder
                    .build()
                    .map_err(Error::UnableToBuildWeb3SignerClient)?;

                SigningMethod::Web3Signer {
                    signing_url,
                    http_client,
                    voting_public_key: def.voting_public_key,
                }
            }
        };

        Ok(Self {
            signing_method: Arc::new(signing_method),
            graffiti: def.graffiti.map(Into::into),
            index: None,
        })
    }

    /// Returns the voting public key for this validator.
    pub fn voting_public_key(&self) -> &PublicKey {
        match &self.signing_method {
        match self.signing_method.as_ref() {
            SigningMethod::LocalKeystore { voting_keypair, .. } => &voting_keypair.pk,
            SigningMethod::Web3Signer {
                voting_public_key, ..
            } => voting_public_key,
        }
    }
}

    /// Returns the voting keypair for this validator.
    pub fn voting_keypair(&self) -> &Keypair {
        match &self.signing_method {
            SigningMethod::LocalKeystore { voting_keypair, .. } => voting_keypair,
        }
    }
pub fn load_pem_certificate<P: AsRef<Path>>(pem_path: P) -> Result<Certificate, Error> {
    let mut buf = Vec::new();
    File::open(&pem_path)
        .map_err(Error::InvalidWeb3SignerRootCertificateFile)?
        .read_to_end(&mut buf)
        .map_err(Error::InvalidWeb3SignerRootCertificateFile)?;
    Certificate::from_pem(&buf).map_err(Error::InvalidWeb3SignerRootCertificate)
}

fn build_web3_signer_url(base_url: &str, voting_public_key: &PublicKey) -> Result<Url, ParseError> {
    Url::parse(base_url)?.join(&format!(
        "api/v1/eth2/sign/{}",
        voting_public_key.to_string()
    ))
}

/// Try to unlock `keystore` at `keystore_path` by prompting the user via `stdin`.
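
One caveat about `build_web3_signer_url` in this hunk: `Url::join` follows RFC 3986 relative resolution, so a base URL whose path lacks a trailing slash has its last path segment replaced rather than extended. The sketch below is a simplified, std-only imitation of that behaviour, written only to make the trailing-slash effect visible. It ignores query strings, fragments and `..` segments. `join_relative` is a hypothetical helper and not the `url` crate API, and the `0xabc` key is a placeholder.

```rust
/// Hypothetical, simplified imitation of relative-reference resolution.
///
/// Everything after the last `/` of the base path is discarded before `relative` is
/// appended. Returns `None` if `base` has no `scheme://` prefix.
pub fn join_relative(base: &str, relative: &str) -> Option<String> {
    // Skip past the scheme separator so its slashes are not mistaken for path slashes.
    let scheme_end = base.find("://")? + 3;
    let authority_and_path = &base[scheme_end..];

    match authority_and_path.rfind('/') {
        // Keep the base up to and including the last slash of its path.
        Some(idx) => Some(format!("{}{}", &base[..scheme_end + idx + 1], relative)),
        // No path at all: behave as if the path were "/".
        None => Some(format!("{}/{}", base, relative)),
    }
}
```

Under these assumptions, `http://host/prefix` joined with `api/v1/eth2/sign/0xabc` loses `prefix`, whereas `http://host/prefix/` keeps it. Operators who run Web3Signer behind a path prefix may therefore want to configure the URL with a trailing slash.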

@@ -325,12 +374,14 @@ impl InitializedValidators {
        self.validators.iter().map(|(pubkey, _)| pubkey)
    }

    /// Returns the voting `Keypair` for a given voting `PublicKey`, if that validator is known to
    /// `self` **and** the validator is enabled.
    pub fn voting_keypair(&self, voting_public_key: &PublicKeyBytes) -> Option<&Keypair> {
    /// Returns the `SigningMethod` for a given voting `PublicKey`, if all are true:
    ///
    /// - The validator is known to `self`.
    /// - The validator is enabled.
    pub fn signing_method(&self, voting_public_key: &PublicKeyBytes) -> Option<Arc<SigningMethod>> {
        self.validators
            .get(voting_public_key)
            .map(|v| v.voting_keypair())
            .map(|v| v.signing_method.clone())
    }

    /// Add a validator definition to `self`, overwriting the on-disk representation of `self`.
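
The switch from returning `Option<&Keypair>` to `Option<Arc<SigningMethod>>` means callers receive an owned handle rather than a borrow of the validator map. Presumably this lets a caller release any lock on the map before awaiting a signature, which matches the PR description's note about not holding a lock across an `await`. A minimal std-only sketch of the lookup follows. `Method` and `Registry` are hypothetical, reduced stand-ins for `SigningMethod` and `InitializedValidators`, keyed by a plain string instead of `PublicKeyBytes`.

```rust
use std::collections::HashMap;
use std::sync::Arc;

/// Hypothetical, reduced stand-in for the real `SigningMethod` enum.
#[derive(Debug, PartialEq)]
pub enum Method {
    LocalKeystore,
    Web3Signer { signing_url: String },
}

/// Hypothetical registry keyed by a hex public key string.
#[derive(Default)]
pub struct Registry {
    validators: HashMap<String, Arc<Method>>,
}

impl Registry {
    /// Registers `method` for `pubkey`, replacing any previous entry.
    pub fn insert(&mut self, pubkey: &str, method: Method) {
        self.validators.insert(pubkey.to_string(), Arc::new(method));
    }

    /// Returns an owned handle. Cloning the `Arc` bumps a reference count and does not
    /// copy the underlying data.
    pub fn signing_method(&self, pubkey: &str) -> Option<Arc<Method>> {
        self.validators.get(pubkey).cloned()
    }
}
```

Because the handle is owned, it remains usable even after the registry itself has been dropped or mutated.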

@@ -431,6 +482,8 @@ impl InitializedValidators {
                    };
                    definitions_map.insert(*key_store.uuid(), def);
                }
                // Remote signer validators don't interact with the key cache.
                SigningDefinition::Web3Signer { .. } => (),
            }
        }

@@ -451,13 +504,13 @@ impl InitializedValidators {
        let mut public_keys = Vec::new();
        for uuid in cache.uuids() {
            let def = definitions_map.get(uuid).expect("Existence checked before");
            let pw = match &def.signing_definition {
            match &def.signing_definition {
                SigningDefinition::LocalKeystore {
                    voting_keystore_password_path,
                    voting_keystore_password,
                    voting_keystore_path,
                } => {
                    if let Some(p) = voting_keystore_password {
                    let pw = if let Some(p) = voting_keystore_password {
                        p.as_ref().to_vec().into()
                    } else if let Some(path) = voting_keystore_password_path {
                        read_password(path).map_err(Error::UnableToReadVotingKeystorePassword)?

@@ -468,11 +521,13 @@ impl InitializedValidators {
                            .as_ref()
                            .to_vec()
                            .into()
                    }
                    };
                    passwords.push(pw);
                    public_keys.push(def.voting_public_key.clone());
                }
                // Remote signer validators don't interact with the key cache.
                SigningDefinition::Web3Signer { .. } => (),
            };
            passwords.push(pw);
            public_keys.push(def.voting_public_key.clone());
        }

        //decrypt

@@ -546,6 +601,7 @@ impl InitializedValidators {
                            info!(
                                self.log,
                                "Enabled validator";
                                "signing_method" => "local_keystore",
                                "voting_pubkey" => format!("{:?}", def.voting_public_key),
                            );

@@ -565,6 +621,40 @@ impl InitializedValidators {
                                self.log,
                                "Failed to initialize validator";
                                "error" => format!("{:?}", e),
                                "signing_method" => "local_keystore",
                                "validator" => format!("{:?}", def.voting_public_key)
                            );

                            // Exit on an invalid validator.
                            return Err(e);
                        }
                    }
                }
                SigningDefinition::Web3Signer { .. } => {
                    match InitializedValidator::from_definition(
                        def.clone(),
                        &mut key_cache,
                        &mut key_stores,
                    )
                    .await
                    {
                        Ok(init) => {
                            self.validators
                                .insert(init.voting_public_key().compress(), init);

                            info!(
                                self.log,
                                "Enabled validator";
                                "signing_method" => "remote_signer",
                                "voting_pubkey" => format!("{:?}", def.voting_public_key),
                            );
                        }
                        Err(e) => {
                            error!(
                                self.log,
                                "Failed to initialize validator";
                                "error" => format!("{:?}", e),
                                "signing_method" => "remote_signer",
                                "validator" => format!("{:?}", def.voting_public_key)
                            );

@@ -585,6 +675,8 @@ impl InitializedValidators {
                            disabled_uuids.insert(*key_store.uuid());
                        }
                    }
                    // Remote signers do not interact with the key cache.
                    SigningDefinition::Web3Signer { .. } => (),
                }

                info!(

@@ -7,19 +7,22 @@ mod config;
mod duties_service;
mod graffiti_file;
mod http_metrics;
mod initialized_validators;
mod key_cache;
mod notifier;
mod signing_method;
mod sync_committee_service;
mod validator_store;

mod doppelganger_service;
pub mod http_api;
pub mod initialized_validators;
pub mod validator_store;

pub use cli::cli_app;
pub use config::Config;
use initialized_validators::InitializedValidators;
use lighthouse_metrics::set_gauge;
use monitoring_api::{MonitoringHttpClient, ProcessType};
pub use slashing_protection::{SlashingDatabase, SLASHING_PROTECTION_FILENAME};

use crate::beacon_node_fallback::{
    start_fallback_updater_service, BeaconNodeFallback, CandidateBeaconNode, RequireSynced,

@@ -33,10 +36,8 @@ use duties_service::DutiesService;
use environment::RuntimeContext;
use eth2::{reqwest::ClientBuilder, BeaconNodeHttpClient, StatusCode, Timeouts};
use http_api::ApiSecret;
use initialized_validators::InitializedValidators;
use notifier::spawn_notifier;
use parking_lot::RwLock;
use slashing_protection::{SlashingDatabase, SLASHING_PROTECTION_FILENAME};
use slog::{error, info, warn, Logger};
use slot_clock::SlotClock;
use slot_clock::SystemTimeSlotClock;

@@ -332,6 +333,7 @@ impl<T: EthSpec> ProductionValidatorClient<T> {
            context.eth2_config.spec.clone(),
            doppelganger_service.clone(),
            slot_clock.clone(),
            context.executor.clone(),
            log.clone(),
        ));

222

validator_client/src/signing_method.rs

Normal file


@@ -0,0 +1,222 @@
//! Provides methods for obtaining validator signatures, including:
//!
//! - Via a local `Keypair`.
//! - Via a remote signer (Web3Signer)

use crate::http_metrics::metrics;
use eth2_keystore::Keystore;
use lockfile::Lockfile;
use reqwest::Client;
use std::path::PathBuf;
use std::sync::Arc;
use task_executor::TaskExecutor;
use types::*;
use url::Url;
use web3signer::{ForkInfo, SigningRequest, SigningResponse};

pub use web3signer::Web3SignerObject;

mod web3signer;

#[derive(Debug, PartialEq)]
pub enum Error {
    InconsistentDomains {
        message_type_domain: Domain,
        domain: Domain,
    },
    Web3SignerRequestFailed(String),
    Web3SignerJsonParsingFailed(String),
    ShuttingDown,
    TokioJoin(String),
}
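
The `ShuttingDown` and `TokioJoin` variants appear to cover the two ways that handing a signature off to a blocking task can fail in `get_signature`: the executor declines to spawn (it returns `None`), or the spawned task cannot be joined. The sketch below reproduces that error-flattening shape with `std::thread` instead of tokio, purely as an analogy. `Executor`, `OffloadError` and `run_offloaded` are hypothetical names and do not correspond to the `TaskExecutor` API.

```rust
use std::thread;

/// Hypothetical error type mirroring the two offloading failure modes.
#[derive(Debug, PartialEq)]
pub enum OffloadError {
    ShuttingDown,
    Join(String),
}

/// Hypothetical executor that refuses new work once shutdown has begun.
pub struct Executor {
    pub shutting_down: bool,
}

impl Executor {
    /// Returns `None` instead of a handle when no task could be spawned.
    pub fn spawn_blocking<R: Send + 'static>(
        &self,
        work: impl FnOnce() -> R + Send + 'static,
    ) -> Option<thread::JoinHandle<R>> {
        if self.shutting_down {
            None
        } else {
            Some(thread::spawn(work))
        }
    }
}

/// Runs `work` off the calling thread and flattens both failure modes into one error type.
pub fn run_offloaded<R: Send + 'static>(
    executor: &Executor,
    work: impl FnOnce() -> R + Send + 'static,
) -> Result<R, OffloadError> {
    executor
        .spawn_blocking(work)
        .ok_or(OffloadError::ShuttingDown)?
        .join()
        .map_err(|_| OffloadError::Join("worker panicked".to_string()))
}
```

In the real code the join step is an `.await` on a tokio handle, so the calling task yields instead of blocking a thread; the error mapping is the part this sketch is meant to show.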

/// Enumerates all messages that can be signed by a validator.
pub enum SignableMessage<'a, T: EthSpec> {
    RandaoReveal(Epoch),
    BeaconBlock(&'a BeaconBlock<T>),
    AttestationData(&'a AttestationData),
    SignedAggregateAndProof(&'a AggregateAndProof<T>),
    SelectionProof(Slot),
    SyncSelectionProof(&'a SyncAggregatorSelectionData),
    SyncCommitteeSignature {
        beacon_block_root: Hash256,
        slot: Slot,
    },
    SignedContributionAndProof(&'a ContributionAndProof<T>),
}

impl<'a, T: EthSpec> SignableMessage<'a, T> {
    /// Returns the `SignedRoot` for the contained message.
    ///
    /// The actual `SignedRoot` trait is not used since it also requires a `TreeHash` impl, which is
    /// not required here.
    pub fn signing_root(&self, domain: Hash256) -> Hash256 {
        match self {
            SignableMessage::RandaoReveal(epoch) => epoch.signing_root(domain),
            SignableMessage::BeaconBlock(b) => b.signing_root(domain),
            SignableMessage::AttestationData(a) => a.signing_root(domain),
            SignableMessage::SignedAggregateAndProof(a) => a.signing_root(domain),
            SignableMessage::SelectionProof(slot) => slot.signing_root(domain),
            SignableMessage::SyncSelectionProof(s) => s.signing_root(domain),
            SignableMessage::SyncCommitteeSignature {
                beacon_block_root, ..
            } => beacon_block_root.signing_root(domain),
            SignableMessage::SignedContributionAndProof(c) => c.signing_root(domain),
        }
    }
}

/// A method used by a validator to sign messages.
///
/// There are two variants: a keystore on the local filesystem and a remote Web3Signer server.
pub enum SigningMethod {
    /// A validator that is defined by an EIP-2335 keystore on the local filesystem.
    LocalKeystore {
        voting_keystore_path: PathBuf,
        voting_keystore_lockfile: Lockfile,
        voting_keystore: Keystore,
        voting_keypair: Arc<Keypair>,
    },
    /// A validator that defers to a Web3Signer server for signing.
    ///
    /// See: https://docs.web3signer.consensys.net/en/latest/
    Web3Signer {
        signing_url: Url,
        http_client: Client,
        voting_public_key: PublicKey,
    },
}

/// The additional information used to construct a signature. Mostly used for protection from replay
/// attacks.
pub struct SigningContext {
    pub domain: Domain,
    pub epoch: Epoch,
    pub fork: Fork,
    pub genesis_validators_root: Hash256,
}

impl SigningContext {
    /// Returns the `Hash256` to be mixed-in with the signature.
    pub fn domain_hash(&self, spec: &ChainSpec) -> Hash256 {
        spec.get_domain(
            self.epoch,
            self.domain,
            &self.fork,
            self.genesis_validators_root,
        )
    }
}

impl SigningMethod {
    /// Return the signature of `signable_message`, with respect to the `signing_context`.
    pub async fn get_signature<T: EthSpec>(
        &self,
        signable_message: SignableMessage<'_, T>,
        signing_context: SigningContext,
        spec: &ChainSpec,
        executor: &TaskExecutor,
    ) -> Result<Signature, Error> {
        let domain_hash = signing_context.domain_hash(spec);
        let SigningContext {
            fork,
            genesis_validators_root,
            ..
        } = signing_context;

        let signing_root = signable_message.signing_root(domain_hash);

        match self {
            SigningMethod::LocalKeystore { voting_keypair, .. } => {
                let _timer =
                    metrics::start_timer_vec(&metrics::SIGNING_TIMES, &[metrics::LOCAL_KEYSTORE]);

                let voting_keypair = voting_keypair.clone();
                // Spawn a blocking task to produce the signature. This avoids blocking the core
                // tokio executor.
                let signature = executor
                    .spawn_blocking_handle(
                        move || voting_keypair.sk.sign(signing_root),
                        "local_keystore_signer",
                    )
                    .ok_or(Error::ShuttingDown)?
                    .await
                    .map_err(|e| Error::TokioJoin(e.to_string()))?;
                Ok(signature)
            }
            SigningMethod::Web3Signer {
                signing_url,
                http_client,
                ..
            } => {
                let _timer =
                    metrics::start_timer_vec(&metrics::SIGNING_TIMES, &[metrics::WEB3SIGNER]);

                // Map the message into a Web3Signer type.
                let object = match signable_message {
                    SignableMessage::RandaoReveal(epoch) => {
                        Web3SignerObject::RandaoReveal { epoch }
                    }
                    SignableMessage::BeaconBlock(block) => Web3SignerObject::beacon_block(block),
                    SignableMessage::AttestationData(a) => Web3SignerObject::Attestation(a),
                    SignableMessage::SignedAggregateAndProof(a) => {
                        Web3SignerObject::AggregateAndProof(a)
                    }
                    SignableMessage::SelectionProof(slot) => {
                        Web3SignerObject::AggregationSlot { slot }
                    }
                    SignableMessage::SyncSelectionProof(s) => {
                        Web3SignerObject::SyncAggregatorSelectionData(s)
                    }
                    SignableMessage::SyncCommitteeSignature {
                        beacon_block_root,
                        slot,
                    } => Web3SignerObject::SyncCommitteeMessage {
                        beacon_block_root,
                        slot,
                    },
                    SignableMessage::SignedContributionAndProof(c) => {
                        Web3SignerObject::ContributionAndProof(c)
                    }
                };

                // Determine the Web3Signer message type.
                let message_type = object.message_type();

                // The `fork_info` field is not required for deposits since they sign across the
                // genesis fork version.
                let fork_info = if let Web3SignerObject::Deposit { .. } = &object {
                    None
                } else {