mirror of

https://github.com/sigp/lighthouse.git

synced 2026-05-31 13:17:09 +00:00

Deposit Cache Finalization & Fast WS Sync (#2915)

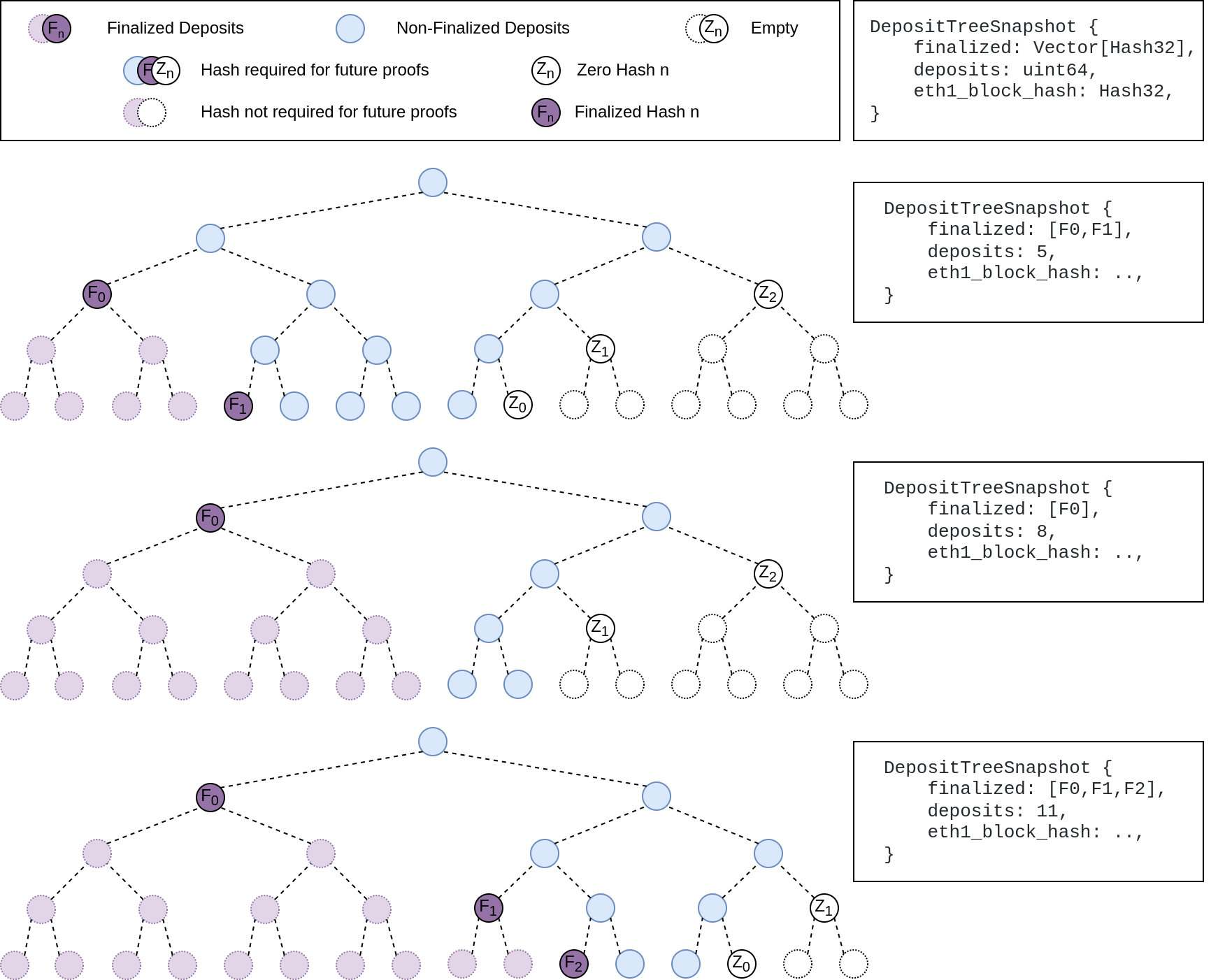

## Summary

The deposit cache now has the ability to finalize deposits. This will cause it to drop unneeded deposit logs and hashes in the deposit Merkle tree that are no longer required to construct deposit proofs. The cache is finalized whenever the latest finalized checkpoint has a new `Eth1Data` with all deposits imported.

This has three benefits:

1. Improves the speed of constructing Merkle proofs for deposits as we can just replay deposits since the last finalized checkpoint instead of all historical deposits when re-constructing the Merkle tree.
2. Significantly faster weak subjectivity sync as the deposit cache can be transferred to the newly syncing node in compressed form. The Merkle tree that stores `N` finalized deposits requires a maximum of `log2(N)` hashes. The newly syncing node then only needs to download deposits since the last finalized checkpoint to have a full tree.
3. Future proofing in preparation for [EIP-4444](https://eips.ethereum.org/EIPS/eip-4444) as execution nodes will no longer be required to store logs permanently so we won't always have all historical logs available to us.

## More Details

Image to illustrate how the deposit contract merkle tree evolves and finalizes along with the resulting `DepositTreeSnapshot`

## Other Considerations

I've changed the structure of the `SszDepositCache` so once you load & save your database from this version of lighthouse, you will no longer be able to load it from older versions.

Co-authored-by: ethDreamer <37123614+ethDreamer@users.noreply.github.com>
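To make the logarithmic bound in benefit 2 concrete, here is a minimal sketch of why a finalized append-only Merkle tree compresses so well. It assumes the usual model in which the first `N` leaves decompose into completely filled subtrees, one per set bit of `N`, so that only one root hash per subtree has to be kept. The helper names `finalized_subtree_sizes` and `finalized_hash_count` are hypothetical and are not part of Lighthouse.

```rust
/// Sizes of the completely filled subtrees that together cover the first `n`
/// leaves of an append-only binary Merkle tree, largest first.
/// Hypothetical helper for illustration only.
fn finalized_subtree_sizes(n: u64) -> Vec<u64> {
    // Each set bit of `n` corresponds to one completely filled subtree.
    (0..u64::BITS)
        .rev()
        .map(|bit| 1u64 << bit)
        .filter(|size| (n & *size) != 0)
        .collect()
}

/// Number of hashes kept under this model: one root per filled subtree,
/// which grows logarithmically in `n`.
fn finalized_hash_count(n: u64) -> u32 {
    n.count_ones()
}
```

Under this model, five finalized deposits are covered by one subtree of four leaves and one subtree of a single leaf, so two hashes suffice. The exact snapshot layout used by the deposit cache may differ from this simplification.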


@@ -1,7 +1,10 @@
 use ssz_derive::{Decode, Encode};
+use std::collections::HashMap;
 use std::ops::RangeInclusive;
 
 pub use eth2::lighthouse::Eth1Block;
+use eth2::types::Hash256;
+use std::sync::Arc;
 
 #[derive(Debug, PartialEq, Clone)]
 pub enum Error {
@@ -20,7 +23,9 @@ pub enum Error {
 /// timestamp.
 #[derive(Debug, PartialEq, Clone, Default, Encode, Decode)]
 pub struct BlockCache {
-    blocks: Vec<Eth1Block>,
+    blocks: Vec<Arc<Eth1Block>>,
+    #[ssz(skip_serializing, skip_deserializing)]
+    by_hash: HashMap<Hash256, Arc<Eth1Block>>,
 }
 
 impl BlockCache {
@@ -36,12 +41,12 @@ impl BlockCache {
 
     /// Returns the earliest (lowest timestamp) block, if any.
     pub fn earliest_block(&self) -> Option<&Eth1Block> {
-        self.blocks.first()
+        self.blocks.first().map(|ptr| ptr.as_ref())
     }
 
     /// Returns the latest (highest timestamp) block, if any.
     pub fn latest_block(&self) -> Option<&Eth1Block> {
-        self.blocks.last()
+        self.blocks.last().map(|ptr| ptr.as_ref())
     }
 
     /// Returns the timestamp of the earliest block in the cache (if any).
@@ -71,7 +76,7 @@ impl BlockCache {
     /// - Monotonically increasing block numbers.
     /// - Non-uniformly increasing block timestamps.
     pub fn iter(&self) -> impl DoubleEndedIterator<Item = &Eth1Block> + Clone {
-        self.blocks.iter()
+        self.blocks.iter().map(|ptr| ptr.as_ref())
     }
 
     /// Shortens the cache, keeping the latest (by block number) `len` blocks while dropping the
@@ -80,7 +85,11 @@ impl BlockCache {
     /// If `len` is greater than the vector's current length, this has no effect.
     pub fn truncate(&mut self, len: usize) {
         if len < self.blocks.len() {
-            self.blocks = self.blocks.split_off(self.blocks.len() - len);
+            let remaining = self.blocks.split_off(self.blocks.len() - len);
+            for block in &self.blocks {
+                self.by_hash.remove(&block.hash);
+            }
+            self.blocks = remaining;
         }
     }
 
@@ -92,12 +101,27 @@ impl BlockCache {
 
     /// Returns a block with the corresponding number, if any.
     pub fn block_by_number(&self, block_number: u64) -> Option<&Eth1Block> {
-        self.blocks.get(
-            self.blocks
-                .as_slice()
-                .binary_search_by(|block| block.number.cmp(&block_number))
-                .ok()?,
-        )
+        self.blocks
+            .get(
+                self.blocks
+                    .as_slice()
+                    .binary_search_by(|block| block.number.cmp(&block_number))
+                    .ok()?,
+            )
+            .map(|ptr| ptr.as_ref())
     }
 
+    /// Returns a block with the corresponding hash, if any.
+    pub fn block_by_hash(&self, block_hash: &Hash256) -> Option<&Eth1Block> {
+        self.by_hash.get(block_hash).map(|ptr| ptr.as_ref())
+    }
+
+    /// Rebuilds the by_hash map
+    pub fn rebuild_by_hash_map(&mut self) {
+        self.by_hash.clear();
+        for block in self.blocks.iter() {
+            self.by_hash.insert(block.hash, block.clone());
+        }
+    }
+
     /// Insert an `Eth1Snapshot` into `self`, allowing future queries.
@@ -161,7 +185,9 @@ impl BlockCache {
             }
         }
 
-        self.blocks.push(block);
+        let ptr = Arc::new(block);
+        self.by_hash.insert(ptr.hash, ptr.clone());
+        self.blocks.push(ptr);
 
         Ok(())
     }
@@ -269,6 +295,8 @@ mod tests {
                 .expect("should add consecutive blocks with duplicate timestamps");
         }
 
+        let blocks = blocks.into_iter().map(Arc::new).collect::<Vec<_>>();
+
         assert_eq!(cache.blocks, blocks, "should have added all blocks");
     }
 }
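
The pattern this diff introduces, an ordered `Vec` plus a hash index that both hold `Arc` pointers to the same blocks, can be tried out in isolation. The following is a minimal std-only sketch rather than the Lighthouse code: `Block` and `Cache` are hypothetical stand-ins for `Eth1Block` and `BlockCache`, and a `u64` replaces `Hash256` so that no external crates are needed.

```rust
use std::collections::HashMap;
use std::sync::Arc;

/// Stand-in for `Eth1Block` (assumption: the real type has more fields).
#[derive(Debug, PartialEq)]
struct Block {
    hash: u64, // assumption: `u64` instead of `Hash256` keeps the sketch std-only
    number: u64,
}

/// Stand-in for `BlockCache`.
#[derive(Default)]
struct Cache {
    /// Blocks ordered by increasing block number.
    blocks: Vec<Arc<Block>>,
    /// Secondary index that shares allocations with `blocks`.
    by_hash: HashMap<u64, Arc<Block>>,
}

impl Cache {
    /// Appends a block and registers it in the hash index.
    fn insert(&mut self, block: Block) {
        let ptr = Arc::new(block);
        self.by_hash.insert(ptr.hash, ptr.clone());
        self.blocks.push(ptr);
    }

    /// Keeps the latest `len` blocks and drops the rest from both structures.
    fn truncate(&mut self, len: usize) {
        if len < self.blocks.len() {
            // After `split_off`, `self.blocks` holds only the blocks to discard.
            let remaining = self.blocks.split_off(self.blocks.len() - len);
            for block in &self.blocks {
                self.by_hash.remove(&block.hash);
            }
            self.blocks = remaining;
        }
    }

    /// Binary search works because `blocks` is sorted by number.
    fn block_by_number(&self, number: u64) -> Option<&Block> {
        let idx = self
            .blocks
            .binary_search_by(|block| block.number.cmp(&number))
            .ok()?;
        self.blocks.get(idx).map(|ptr| ptr.as_ref())
    }

    /// Constant-time lookup through the index.
    fn block_by_hash(&self, hash: u64) -> Option<&Block> {
        self.by_hash.get(&hash).map(|ptr| ptr.as_ref())
    }

    /// Restores the index from `blocks`, e.g. after deserializing a cache
    /// whose index was skipped during serialization.
    fn rebuild_by_hash_map(&mut self) {
        self.by_hash.clear();
        for block in self.blocks.iter() {
            self.by_hash.insert(block.hash, block.clone());
        }
    }
}
```

Because the index stores `Arc` clones rather than copies, each block is allocated once. The cost is that every mutation of `blocks` has to keep `by_hash` in step, which is why `truncate` removes the discarded hashes before replacing the vector.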
