* Attestation superstruct changes for EIP 7549 (#5644)

* update

* experiment

* superstruct changes

* revert

* superstruct changes

* fix tests

* indexed attestation

* indexed attestation superstruct

* updated TODOs

* `superstruct` the `AttesterSlashing` (#5636)

* `superstruct` Attester Fork Variants

* Push a little further

* Deal with Encode / Decode of AttesterSlashing

* not so sure about this..

* Stop Encode/Decode Bounds from Propagating Out

* Tons of Changes..

* More Conversions to AttestationRef

* Add AsReference trait (#15)

* Add AsReference trait

* Fix some snafus

* Got it Compiling! :D

* Got Tests Building

* Get beacon chain tests compiling

---------

Co-authored-by: Michael Sproul <micsproul@gmail.com>

* Merge remote-tracking branch 'upstream/unstable' into electra_attestation_changes

* Make EF Tests Fork-Agnostic (#5713)

* Finish EF Test Fork Agnostic (#5714)

* Superstruct `AggregateAndProof` (#5715)

* Upgrade `superstruct` to `0.8.0`

* superstruct `AggregateAndProof`

* Merge remote-tracking branch 'sigp/unstable' into electra_attestation_changes

* cargo fmt

* Merge pull request #5726 from realbigsean/electra_attestation_changes

Merge unstable into Electra attestation changes

* EIP7549 `get_attestation_indices` (#5657)

* get attesting indices electra impl

* fmt

* get tests to pass

* fmt

* fix some beacon chain tests

* fmt

* fix slasher test

* fmt got me again

* fix more tests

* fix tests

* Some small changes (#5739)

* cargo fmt (#5740)

* Sketch op pool changes

* fix get attesting indices (#5742)

* fix get attesting indices

* better errors

* fix compile

* only get committee index once

* Ef test fixes (#5753)

* attestation related ef test fixes

* delete commented out stuff

* Fix Aggregation Pool for Electra (#5754)

* Fix Aggregation Pool for Electra

* Remove Outdated Interface

* fix ssz (#5755)

* Get `electra_op_pool` up to date (#5756)

* fix get attesting indices (#5742)

* fix get attesting indices

* better errors

* fix compile

* only get committee index once

* Ef test fixes (#5753)

* attestation related ef test fixes

* delete commented out stuff

* Fix Aggregation Pool for Electra (#5754)

* Fix Aggregation Pool for Electra

* Remove Outdated Interface

* fix ssz (#5755)

---------

Co-authored-by: realbigsean <sean@sigmaprime.io>

* Revert "Get `electra_op_pool` up to date (#5756)" (#5757)

This reverts commit ab9e58aa3d.

* Merge branch 'electra_attestation_changes' of https://github.com/sigp/lighthouse into electra_op_pool

* Compute on chain aggregate impl (#5752)

* add compute_on_chain_agg impl to op pool changes

* fmt

* get op pool tests to pass

* update the naive agg pool interface (#5760)

* Fix bugs in cross-committee aggregation

* Add comment to max cover optimisation

* Fix assert

* Merge pull request #5749 from sigp/electra_op_pool

Optimise Electra op pool aggregation

* update committee offset

* Fix Electra Fork Choice Tests (#5764)

* Subscribe to the correct subnets for electra attestations (#5782)

* subscribe to the correct att subnets for electra

* subscribe to the correct att subnets for electra

* cargo fmt

* fix slashing handling

* Merge remote-tracking branch 'upstream/unstable'

* Send unagg attestation based on fork

* Publish all aggregates

* just one more check bro plz..

* Merge pull request #5832 from ethDreamer/electra_attestation_changes_merge_unstable

Merge `unstable` into `electra_attestation_changes`

* Merge pull request #5835 from realbigsean/fix-validator-logic

Fix validator logic

* Merge pull request #5816 from realbigsean/electra-attestation-slashing-handling

Electra slashing handling

* Electra attestation changes rm decode impl (#5856)

* Remove Crappy Decode impl for Attestation

* Remove Inefficient Attestation Decode impl

* Implement Schema Upgrade / Downgrade

* Update beacon_node/beacon_chain/src/schema_change/migration_schema_v20.rs

Co-authored-by: Michael Sproul <micsproul@gmail.com>

---------

Co-authored-by: Michael Sproul <micsproul@gmail.com>

* Fix failing attestation tests and misc electra attestation cleanup (#5810)

* - get attestation related beacon chain tests to pass

- observed attestations are now keyed off of data + committee index

- rename op pool attestationref to compactattestationref

- remove unwraps in agg pool and use options instead

- cherry pick some changes from ef-tests-electra

* cargo fmt

* fix failing test

* Revert dockerfile changes

* make committee_index return option

* function args shouldnt be a ref to attestation ref

* fmt

* fix dup imports

---------

Co-authored-by: realbigsean <seananderson33@GMAIL.com>

* fix some todos (#5817)

* Merge branch 'unstable' of https://github.com/sigp/lighthouse into electra_attestation_changes

* add consolidations to merkle calc for inclusion proof

* Remove Duplicate KZG Commitment Merkle Proof Code (#5874)

* Remove Duplicate KZG Commitment Merkle Proof Code

* s/tree_lists/fields/

* Merge branch 'unstable' of https://github.com/sigp/lighthouse into electra_attestation_changes

* fix compile

* Fix slasher tests (#5906)

* Fix electra tests

* Add electra attestations to double vote tests

* Update superstruct to 0.8

* Merge remote-tracking branch 'origin/unstable' into electra_attestation_changes

* Small cleanup in slasher tests

* Clean up Electra observed aggregates (#5929)

* Use consistent key in observed_attestations

* Remove unwraps from observed aggregates

* Merge branch 'unstable' of https://github.com/sigp/lighthouse into electra_attestation_changes

* De-dup attestation constructor logic

* Remove unwraps in Attestation construction

* Dedup match_attestation_data

* Remove outdated TODO

* Use ForkName Ord in fork-choice tests

* Use ForkName Ord in BeaconBlockBody

* Make to_electra not fallible

* Remove TestRandom impl for IndexedAttestation

* Remove IndexedAttestation faulty Decode impl

* Drop TestRandom impl

* Add PendingAttestationInElectra

* Indexed att on disk (#35)

* indexed att on disk

* fix lints

* Update slasher/src/migrate.rs

Co-authored-by: ethDreamer <37123614+ethDreamer@users.noreply.github.com>

---------

Co-authored-by: Lion - dapplion <35266934+dapplion@users.noreply.github.com>

Co-authored-by: ethDreamer <37123614+ethDreamer@users.noreply.github.com>

* add electra fork enabled fn to ForkName impl (#36)

* add electra fork enabled fn to ForkName impl

* remove inadvertent file

* Update common/eth2/src/types.rs

Co-authored-by: ethDreamer <37123614+ethDreamer@users.noreply.github.com>

* Dedup attestation constructor logic in attester cache

* Use if let Ok for committee_bits

* Dedup Attestation constructor code

* Diff reduction in tests

* Fix beacon_chain tests

* Diff reduction

* Use Ord for ForkName in pubsub

* Resolve into_attestation_and_indices todo

* Remove stale TODO

* Fix beacon_chain tests

* Test spec invariant

* Use electra_enabled in pubsub

* Remove get_indexed_attestation_from_signed_aggregate

* Use ok_or instead of if let else

* committees are sorted

* remove dup method `get_indexed_attestation_from_committees`

* Merge pull request #5940 from dapplion/electra_attestation_changes_lionreview

Electra attestations #5712 review

* update default persisted op pool deserialization

* ensure aggregate and proof uses serde untagged on ref

* Fork aware ssz static attestation tests

* Electra attestation changes from Lions review (#5971)

* dedup/cleanup and remove unneeded hashset use

* remove irrelevant TODOs

* Merge branch 'unstable' of https://github.com/sigp/lighthouse into electra_attestation_changes

* Electra attestation changes sean review (#5972)

* instantiate empty bitlist in unreachable code

* clean up error conversion

* fork enabled bool cleanup

* remove a couple todos

* return bools instead of options in `aggregate` and use the result

* delete commented out code

* use map macros in simple transformations

* remove signers_disjoint_from

* get ef tests compiling

* get ef tests compiling

* update intentionally excluded files

* Avoid changing slasher schema for Electra

* Delete slasher schema v4

* Fix clippy

* Fix compilation of beacon_chain tests

* Update database.rs

* Add electra lightclient types

* Update slasher/src/database.rs

* fix imports

* Merge pull request #5980 from dapplion/electra-lightclient

Add electra lightclient types

* Merge pull request #5975 from michaelsproul/electra-slasher-no-migration

Avoid changing slasher schema for Electra

* Update beacon_node/beacon_chain/src/attestation_verification.rs

* Update beacon_node/beacon_chain/src/attestation_verification.rs

* expose builder booster flags in vc, enable options in validator endpoints, update tests

* resolve failing test

* fix issues related to CreateConfig and MoveConfig

* remove unneeded val, change how boost factor flag logic in the vc, add some additional documentation

* fix typos

* fix typos

* assume builder-proosals flag if one of other two vc builder flags are present

* fmt

* typo

* typo

* Fix CLI help text

* Prioritise per validator builder boost configurations over CLI flags.

* Add http test for builder boost factor with process defaults.

* Fix issue with PATCH request

* Add prefer builder proposals

* Add more builder boost factor tests.

---------

Co-authored-by: Mac L <mjladson@pm.me>

Co-authored-by: Jimmy Chen <jchen.tc@gmail.com>

Co-authored-by: Paul Hauner <paul@paulhauner.com>

* multiple broadcast flags

* rewrite with single --broadcast option

* satisfy cargo fmt

* shorten sync-committee-messages

* fix a doc comment and a test

* use strum

* Add broadcast test to simulator

* bring --disable-run-on-all flag back with deprecation notice

## Issue Addressed

Closes#4473 (take 3)

## Proposed Changes

- Send a 202 status code by default for duplicate blocks, instead of 400. This conveys to the caller that the block was published, but makes no guarantees about its validity. Block relays can count this as a success or a failure as they wish.

- For users wanting finer-grained control over which status is returned for duplicates, a flag `--http-duplicate-block-status` can be used to adjust the behaviour. A 400 status can be supplied to restore the old (spec-compliant) behaviour, or a 200 status can be used to silence VCs that warn loudly for non-200 codes (e.g. Lighthouse prior to v4.4.0).

- Update the Lighthouse VC to gracefully handle success codes other than 200. The info message isn't the nicest thing to read, but it covers all bases and isn't a nasty `ERRO`/`CRIT` that will wake anyone up.

## Additional Info

I'm planning to raise a PR to `beacon-APIs` to specify that clients may return 202 for duplicate blocks. Really it would be nice to use some 2xx code that _isn't_ the same as the code for "published but invalid". I think unfortunately there aren't any suitable codes, and maybe the best fit is `409 CONFLICT`. Given that we need to fix this promptly for our release, I think using the 202 code temporarily with configuration strikes a nice compromise.

## Issue Addressed

Addresses #2557

## Proposed Changes

Adds the `lighthouse validator-manager` command, which provides:

- `lighthouse validator-manager create`

- Creates a `validators.json` file and a `deposits.json` (same format as https://github.com/ethereum/staking-deposit-cli)

- `lighthouse validator-manager import`

- Imports validators from a `validators.json` file to the VC via the HTTP API.

- `lighthouse validator-manager move`

- Moves validators from one VC to the other, utilizing only the VC API.

## Additional Info

In 98bcb947c I've reduced some VC `ERRO` and `CRIT` warnings to `WARN` or `DEBG` for the case where a pubkey is missing from the validator store. These were being triggered when we removed a validator but still had it in caches. It seems to me that `UnknownPubkey` will only happen in the case where we've removed a validator, so downgrading the logs is prudent. All the logs are `DEBG` apart from attestations and blocks which are `WARN`. I thought having *some* logging about this condition might help us down the track.

In 856cd7e37d I've made the VC delete the corresponding password file when it's deleting a keystore. This seemed like nice hygiene. Notably, it'll only delete that password file after it scans the validator definitions and finds that no other validator is also using that password file.

## Issue Addressed

NA

## Proposed Changes

Downgrade a `CRIT` to an `ERRO` when there's an `Irrecoverable` error whilst publishing a blinded block.

It's quite common for builders successfully broadcast a block to the network whilst failing to respond to the BN when it publishes a signed, blinded block. The VC is currently raising a `CRIT` when this happens and I think that's excessive.

These changes have the same intent as #4073. In that PR I only managed to remove the `CRIT`s in the BN but missed this one in the VC.

I've also tidied the log messages to:

- Give them all the same title (*"Error whilst producing block"*) to help with grepping.

- Include the `block_slot` so it's easy to look up the slot in an explorer and see if it was actually skipped.

## Additional Info

This PR should not change any logic beyond logging.

It is a well-known fact that IP addresses for beacon nodes used by specific validators can be de-anonymized. There is an assumed risk that a malicious user may attempt to DOS validators when producing blocks to prevent chain growth/liveness.

Although there are a number of ideas put forward to address this, there a few simple approaches we can take to mitigate this risk.

Currently, a Lighthouse user is able to set a number of beacon-nodes that their validator client can connect to. If one beacon node is taken offline, it can fallback to another. Different beacon nodes can use VPNs or rotate IPs in order to mask their IPs.

This PR provides an additional setup option which further mitigates attacks of this kind.

This PR introduces a CLI flag --proposer-only to the beacon node. Setting this flag will configure the beacon node to run with minimal peers and crucially will not subscribe to subnets or sync committees. Therefore nodes of this kind should not be identified as nodes connected to validators of any kind.

It also introduces a CLI flag --proposer-nodes to the validator client. Users can then provide a number of beacon nodes (which may or may not run the --proposer-only flag) that the Validator client will use for block production and propagation only. If these nodes fail, the validator client will fallback to the default list of beacon nodes.

Users are then able to set up a number of beacon nodes dedicated to block proposals (which are unlikely to be identified as validator nodes) and point their validator clients to produce blocks on these nodes and attest on other beacon nodes. An attack attempting to prevent liveness on the eth2 network would then need to preemptively find and attack the proposer nodes which is significantly more difficult than the default setup.

This is a follow on from: #3328

Co-authored-by: Michael Sproul <michael@sigmaprime.io>

Co-authored-by: Paul Hauner <paul@paulhauner.com>

## Issue Addressed

Closes#3963 (hopefully)

## Proposed Changes

Compute attestation selection proofs gradually each slot rather than in a single `join_all` at the start of each epoch. On a machine with 5k validators this replaces 5k tasks signing 5k proofs with 1 task that signs 5k/32 ~= 160 proofs each slot.

Based on testing with Goerli validators this seems to reduce the average time to produce a signature by preventing Tokio and the OS from falling over each other trying to run hundreds of threads. My testing so far has been with local keystores, which run on a dynamic pool of up to 512 OS threads because they use [`spawn_blocking`](https://docs.rs/tokio/1.11.0/tokio/task/fn.spawn_blocking.html) (and we haven't changed the default).

An earlier version of this PR hyper-optimised the time-per-signature metric to the detriment of the entire system's performance (see the reverted commits). The current PR is conservative in that it avoids touching the attestation service at all. I think there's more optimising to do here, but we can come back for that in a future PR rather than expanding the scope of this one.

The new algorithm for attestation selection proofs is:

- We sign a small batch of selection proofs each slot, for slots up to 8 slots in the future. On average we'll sign one slot's worth of proofs per slot, with an 8 slot lookahead.

- The batch is signed halfway through the slot when there is unlikely to be contention for signature production (blocks are <4s, attestations are ~4-6 seconds, aggregates are 8s+).

## Performance Data

_See first comment for updated graphs_.

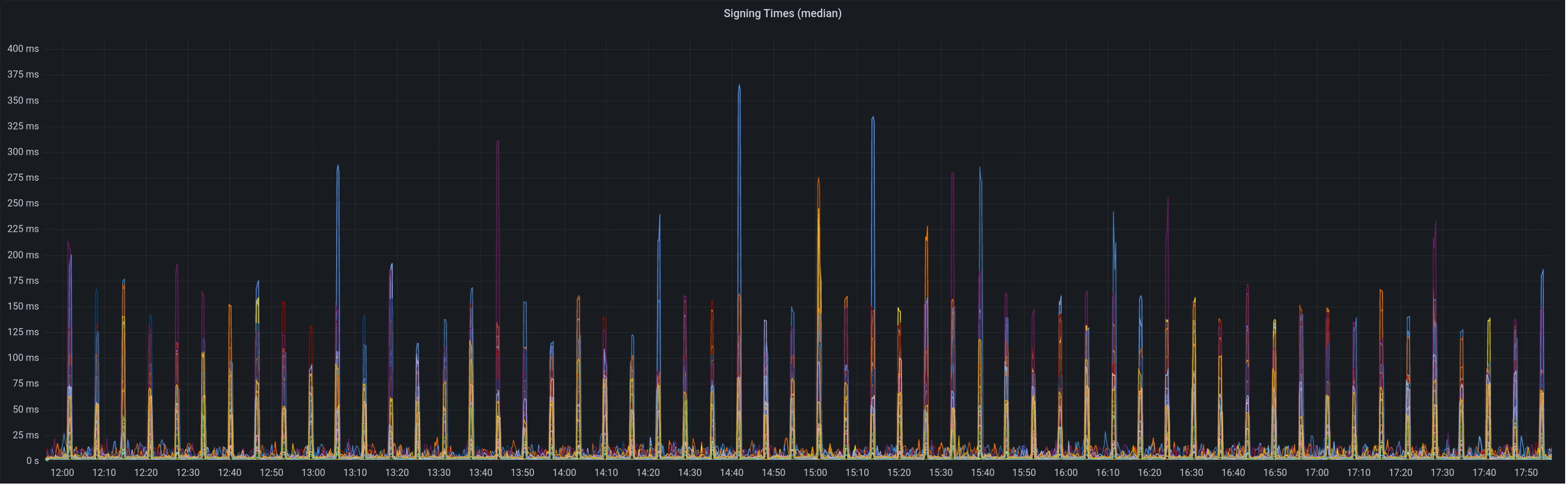

Graph of median signing times before this PR:

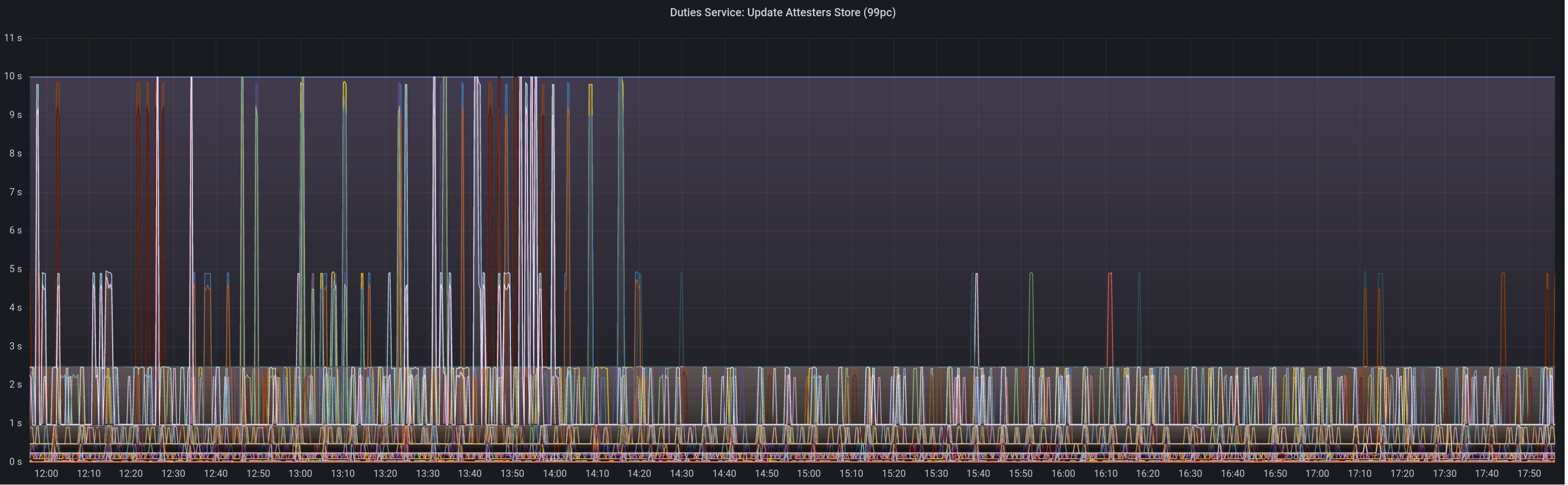

Graph of update attesters metric (includes selection proof signing) before this PR:

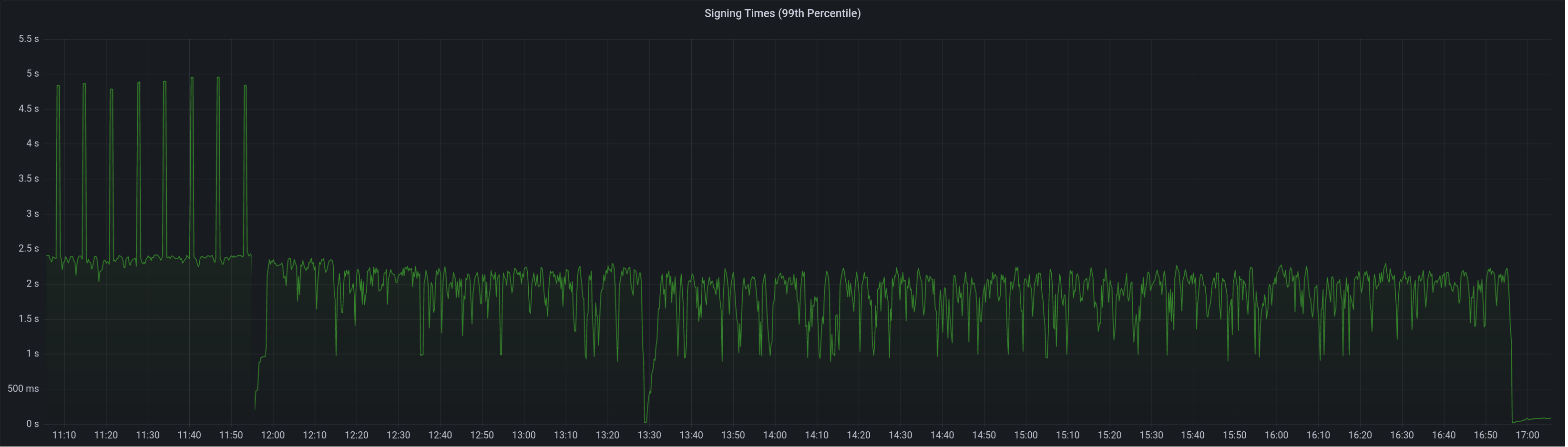

Median signing time after this PR (prototype from 12:00, updated version from 13:30):

99th percentile on signing times (bounded attestation signing from 16:55, now removed):

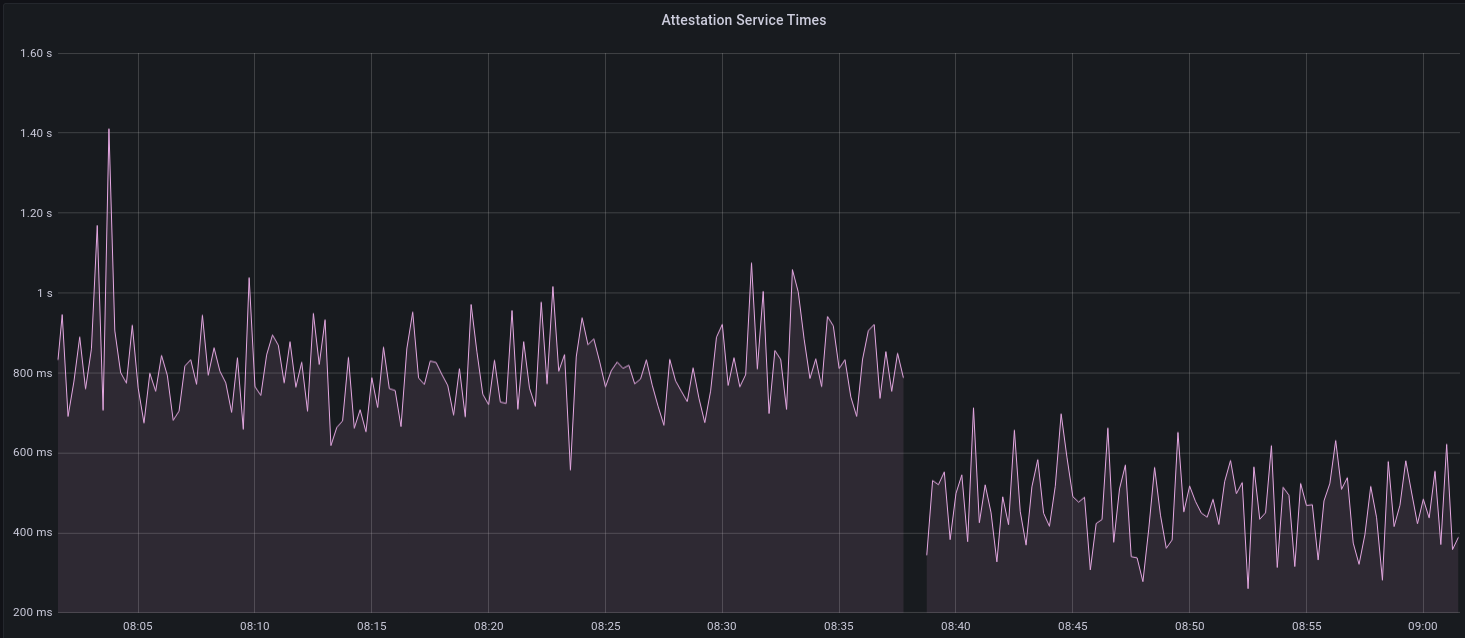

Attester map update timing after this PR:

Selection proof signings per second change:

## Link to late blocks

I believe this is related to the slow block signings because logs from Stakely in #3963 show these two logs almost 5 seconds apart:

> Feb 23 18:56:23.978 INFO Received unsigned block, slot: 5862880, service: block, module: validator_client::block_service:393

> Feb 23 18:56:28.552 INFO Publishing signed block, slot: 5862880, service: block, module: validator_client::block_service:416

The only thing that happens between those two logs is the signing of the block:

0fb58a680d/validator_client/src/block_service.rs (L410-L414)

Helpfully, Stakely noticed this issue without any Lighthouse BNs in the mix, which pointed to a clear issue in the VC.

## TODO

- [x] Further testing on testnet infrastructure.

- [x] Make the attestation signing parallelism configurable.

## Issue Addressed

#3853

## Proposed Changes

Added `INFO` level logs for requesting and receiving the unsigned block.

## Additional Info

Logging for successfully publishing the signed block is already there. And seemingly there is a log for when "We realize we are going to produce a block" in the `start_update_service`: `info!(log, "Block production service started");

`. Is there anywhere else you'd like to see logging around this event?

Co-authored-by: GeemoCandama <104614073+GeemoCandama@users.noreply.github.com>

## Proposed Changes

With proposer boosting implemented (#2822) we have an opportunity to re-org out late blocks.

This PR adds three flags to the BN to control this behaviour:

* `--disable-proposer-reorgs`: turn aggressive re-orging off (it's on by default).

* `--proposer-reorg-threshold N`: attempt to orphan blocks with less than N% of the committee vote. If this parameter isn't set then N defaults to 20% when the feature is enabled.

* `--proposer-reorg-epochs-since-finalization N`: only attempt to re-org late blocks when the number of epochs since finalization is less than or equal to N. The default is 2 epochs, meaning re-orgs will only be attempted when the chain is finalizing optimally.

For safety Lighthouse will only attempt a re-org under very specific conditions:

1. The block being proposed is 1 slot after the canonical head, and the canonical head is 1 slot after its parent. i.e. at slot `n + 1` rather than building on the block from slot `n` we build on the block from slot `n - 1`.

2. The current canonical head received less than N% of the committee vote. N should be set depending on the proposer boost fraction itself, the fraction of the network that is believed to be applying it, and the size of the largest entity that could be hoarding votes.

3. The current canonical head arrived after the attestation deadline from our perspective. This condition was only added to support suppression of forkchoiceUpdated messages, but makes intuitive sense.

4. The block is being proposed in the first 2 seconds of the slot. This gives it time to propagate and receive the proposer boost.

## Additional Info

For the initial idea and background, see: https://github.com/ethereum/consensus-specs/pull/2353#issuecomment-950238004

There is also a specification for this feature here: https://github.com/ethereum/consensus-specs/pull/3034

Co-authored-by: Michael Sproul <micsproul@gmail.com>

Co-authored-by: pawan <pawandhananjay@gmail.com>

## Issue Addressed

#3766

## Proposed Changes

Adds an endpoint to get the graffiti that will be used for the next block proposal for each validator.

## Usage

```bash

curl -H "Authorization: Bearer api-token" http://localhost:9095/lighthouse/ui/graffiti | jq

```

```json

{

"data": {

"0x81283b7a20e1ca460ebd9bbd77005d557370cabb1f9a44f530c4c4c66230f675f8df8b4c2818851aa7d77a80ca5a4a5e": "mr f was here",

"0xa3a32b0f8b4ddb83f1a0a853d81dd725dfe577d4f4c3db8ece52ce2b026eca84815c1a7e8e92a4de3d755733bf7e4a9b": "mr v was here",

"0x872c61b4a7f8510ec809e5b023f5fdda2105d024c470ddbbeca4bc74e8280af0d178d749853e8f6a841083ac1b4db98f": null

}

}

```

## Additional Info

This will only return graffiti that the validator client knows about.

That is from these 3 sources:

1. Graffiti File

2. validator_definitions.yml

3. The `--graffiti` flag on the VC

If the graffiti is set on the BN, it will not be returned. This may warrant an additional endpoint on the BN side which can be used in the event the endpoint returns `null`.

* add capella gossip boiler plate

* get everything compiling

Co-authored-by: realbigsean <sean@sigmaprime.io

Co-authored-by: Mark Mackey <mark@sigmaprime.io>

* small cleanup

* small cleanup

* cargo fix + some test cleanup

* improve block production

* add fixme for potential panic

Co-authored-by: Mark Mackey <mark@sigmaprime.io>

## Issue Addressed

Resolves#3516

## Proposed Changes

Adds a beacon fallback function for running a beacon node http query on all available fallbacks instead of returning on a first successful result. Uses the new `run_on_all` method for attestation and sync committee subscriptions.

## Additional Info

Please provide any additional information. For example, future considerations

or information useful for reviewers.

## Issue Addressed

Resolves: #3550

Remove the `--strict-fee-recipient` flag. It will cause missed proposals prior to the bellatrix transition.

Co-authored-by: realbigsean <sean@sigmaprime.io>

## Issue Addressed

Relates to https://github.com/sigp/lighthouse/issues/3416

## Proposed Changes

- Add an `OfflineOnFailure` enum to the `first_success` method for querying beacon nodes so that a val registration request failure from the BN -> builder does not result in the BN being marked offline. This seems important because these failures could be coming directly from a connected relay and actually have no bearing on BN health. Other messages that are sent to a relay have a local fallback so shouldn't result in errors

- Downgrade the following log to a `WARN`

```

ERRO Unable to publish validator registrations to the builder network, error: All endpoints failed https://BN_B => RequestFailed(ServerMessage(ErrorMessage { code: 500, message: "UNHANDLED_ERROR: BuilderMissing", stacktraces: [] })), https://XXXX/ => Unavailable(Offline), [omitted]

```

## Additional Info

I think this change at least improves the UX of having a VC connected to some builder and some non-builder beacon nodes. I think we need to balance potentially alerting users that there is a BN <> VC misconfiguration and also allowing this type of fallback to work.

If we want to fully support this type of configuration we may want to consider adding a flag `--builder-beacon-nodes` and track whether a VC should be making builder queries on a per-beacon node basis. But I think the changes in this PR are independent of that type of extension.

PS: Sorry for the big diff here, it's mostly formatting changes after I added a new arg to a bunch of methods calls.

Co-authored-by: realbigsean <sean@sigmaprime.io>

## Issue Addressed

https://github.com/sigp/lighthouse/issues/3091

Extends https://github.com/sigp/lighthouse/pull/3062, adding pre-bellatrix block support on blinded endpoints and allowing the normal proposal flow (local payload construction) on blinded endpoints. This resulted in better fallback logic because the VC will not have to switch endpoints on failure in the BN <> Builder API, the BN can just fallback immediately and without repeating block processing that it shouldn't need to. We can also keep VC fallback from the VC<>BN API's blinded endpoint to full endpoint.

## Proposed Changes

- Pre-bellatrix blocks on blinded endpoints

- Add a new `PayloadCache` to the execution layer

- Better fallback-from-builder logic

## Todos

- [x] Remove VC transition logic

- [x] Add logic to only enable builder flow after Merge transition finalization

- [x] Tests

- [x] Fix metrics

- [x] Rustdocs

Co-authored-by: Mac L <mjladson@pm.me>

Co-authored-by: realbigsean <sean@sigmaprime.io>

## Issue Addressed

Resolves#3267Resolves#3156

## Proposed Changes

- Move the log for fee recipient checks from proposer cache insertion into block proposal so we are directly checking what we get from the EE

- Only log when there is a discrepancy with the local EE, not when using the builder API. In the `builder-api` branch there is an `info` log when there is a discrepancy, I think it is more likely there will be a difference in fee recipient with the builder api because proposer payments might be made via a transaction in the block. Not really sure what patterns will become commong.

- Upgrade the log from a `warn` to an `error` - not actually sure which we want, but I think this is worth an error because the local EE with default transaction ordering I think should pretty much always use the provided fee recipient

- add a `strict-fee-recipient` flag to the VC so we only sign blocks with matching fee recipients. Falls back from the builder API to the local API if there is a discrepancy .

Co-authored-by: realbigsean <sean@sigmaprime.io>

## Issue Addressed

#3141

## Proposed Changes

Changes the algorithm for proposing blocks from

```

For each BN (first success):

- Produce a block

- Sign the block and store its root in the slashing protection DB

- Publish the block

```

to

```

For each BN (first success):

- Produce a block

Sign the block and store its root in the slashing protection DB

For each BN (first success):

- Publish the block

```

Separating the producing from the publishing makes sure that we only add a signed block once to the slashing DB.

## Issue Addressed

MEV boost compatibility

## Proposed Changes

See #2987

## Additional Info

This is blocked on the stabilization of a couple specs, [here](https://github.com/ethereum/beacon-APIs/pull/194) and [here](https://github.com/flashbots/mev-boost/pull/20).

Additional TODO's and outstanding questions

- [ ] MEV boost JWT Auth

- [ ] Will `builder_proposeBlindedBlock` return the revealed payload for the BN to propogate

- [ ] Should we remove `private-tx-proposals` flag and communicate BN <> VC with blinded blocks by default once these endpoints enter the beacon-API's repo? This simplifies merge transition logic.

Co-authored-by: realbigsean <seananderson33@gmail.com>

Co-authored-by: realbigsean <sean@sigmaprime.io>

## Issue Addressed

Resolves#2612

## Proposed Changes

Implements both the checks mentioned in the original issue.

1. Verifies the `randao_reveal` in the beacon node

2. Cross checks the proposer index after getting back the block from the beacon node.

## Additional info

The block production time increases by ~10x because of the signature verification on the beacon node (based on the `beacon_block_production_process_seconds` metric) when running on a local testnet.

[EIP-3030]: https://eips.ethereum.org/EIPS/eip-3030

[Web3Signer]: https://consensys.github.io/web3signer/web3signer-eth2.html

## Issue Addressed

Resolves#2498

## Proposed Changes

Allows the VC to call out to a [Web3Signer] remote signer to obtain signatures.

## Additional Info

### Making Signing Functions `async`

To allow remote signing, I needed to make all the signing functions `async`. This caused a bit of noise where I had to convert iterators into `for` loops.

In `duties_service.rs` there was a particularly tricky case where we couldn't hold a write-lock across an `await`, so I had to first take a read-lock, then grab a write-lock.

### Move Signing from Core Executor

Whilst implementing this feature, I noticed that we signing was happening on the core tokio executor. I suspect this was causing the executor to temporarily lock and occasionally trigger some HTTP timeouts (and potentially SQL pool timeouts, but I can't verify this). Since moving all signing into blocking tokio tasks, I noticed a distinct drop in the "atttestations_http_get" metric on a Prater node:

I think this graph indicates that freeing the core executor allows the VC to operate more smoothly.

### Refactor TaskExecutor

I noticed that the `TaskExecutor::spawn_blocking_handle` function would fail to spawn tasks if it were unable to obtain handles to some metrics (this can happen if the same metric is defined twice). It seemed that a more sensible approach would be to keep spawning tasks, but without metrics. To that end, I refactored the function so that it would still function without metrics. There are no other changes made.

## TODO

- [x] Restructure to support multiple signing methods.

- [x] Add calls to remote signer from VC.

- [x] Documentation

- [x] Test all endpoints

- [x] Test HTTPS certificate

- [x] Allow adding remote signer validators via the API

- [x] Add Altair support via [21.8.1-rc1](https://github.com/ConsenSys/web3signer/releases/tag/21.8.1-rc1)

- [x] Create issue to start using latest version of web3signer. (See #2570)

## Notes

- ~~Web3Signer doesn't yet support the Altair fork for Prater. See https://github.com/ConsenSys/web3signer/issues/423.~~

- ~~There is not yet a release of Web3Signer which supports Altair blocks. See https://github.com/ConsenSys/web3signer/issues/391.~~

## Issue Addressed

Resolves#2069

## Proposed Changes

- Adds a `--doppelganger-detection` flag

- Adds a `lighthouse/seen_validators` endpoint, which will make it so the lighthouse VC is not interopable with other client beacon nodes if the `--doppelganger-detection` flag is used, but hopefully this will become standardized. Relevant Eth2 API repo issue: https://github.com/ethereum/eth2.0-APIs/issues/64

- If the `--doppelganger-detection` flag is used, the VC will wait until the beacon node is synced, and then wait an additional 2 epochs. The reason for this is to make sure the beacon node is able to subscribe to the subnets our validators should be attesting on. I think an alternative would be to have the beacon node subscribe to all subnets for 2+ epochs on startup by default.

## Additional Info

I'd like to add tests and would appreciate feedback.

TODO: handle validators started via the API, potentially make this default behavior

Co-authored-by: realbigsean <seananderson33@gmail.com>

Co-authored-by: Michael Sproul <michael@sigmaprime.io>

Co-authored-by: Paul Hauner <paul@paulhauner.com>

## Issue Addressed

Resolves#2313

## Proposed Changes

Provide `BeaconNodeHttpClient` with a dedicated `Timeouts` struct.

This will allow granular adjustment of the timeout duration for different calls made from the VC to the BN. These can either be a constant value, or as a ratio of the slot duration.

Improve timeout performance by using these adjusted timeout duration's only whenever a fallback endpoint is available.

Add a CLI flag called `use-long-timeouts` to revert to the old behavior.

## Additional Info

Additionally set the default `BeaconNodeHttpClient` timeouts to the be the slot duration of the network, rather than a constant 12 seconds. This will allow it to adjust to different network specifications.

Co-authored-by: Paul Hauner <paul@paulhauner.com>

## Proposed Changes

Implement the consensus changes necessary for the upcoming Altair hard fork.

## Additional Info

This is quite a heavy refactor, with pivotal types like the `BeaconState` and `BeaconBlock` changing from structs to enums. This ripples through the whole codebase with field accesses changing to methods, e.g. `state.slot` => `state.slot()`.

Co-authored-by: realbigsean <seananderson33@gmail.com>

## Issue Addressed

Closes#2052

## Proposed Changes

- Refactor the attester/proposer duties endpoints in the BN

- Performance improvements

- Fixes some potential inconsistencies with the dependent root fields.

- Removes `http_api::beacon_proposer_cache` and just uses the one on the `BeaconChain` instead.

- Move the code for the proposer/attester duties endpoints into separate files, for readability.

- Refactor the `DutiesService` in the VC

- Required to reduce the delay on broadcasting new blocks.

- Gets rid of the `ValidatorDuty` shim struct that came about when we adopted the standard API.

- Separate block/attestation duty tasks so that they don't block each other when one is slow.

- In the VC, use `PublicKeyBytes` to represent validators instead of `PublicKey`. `PublicKey` is a legit crypto object whilst `PublicKeyBytes` is just a byte-array, it's much faster to clone/hash `PublicKeyBytes` and this change has had a significant impact on runtimes.

- Unfortunately this has created lots of dust changes.

- In the BN, store `PublicKeyBytes` in the `beacon_proposer_cache` and allow access to them. The HTTP API always sends `PublicKeyBytes` over the wire and the conversion from `PublicKey` -> `PublickeyBytes` is non-trivial, especially when queries have 100s/1000s of validators (like Pyrmont).

- Add the `state_processing::state_advance` mod which dedups a lot of the "apply `n` skip slots to the state" code.

- This also fixes a bug with some functions which were failing to include a state root as per [this comment](072695284f/consensus/state_processing/src/state_advance.rs (L69-L74)). I couldn't find any instance of this bug that resulted in anything more severe than keying a shuffling cache by the wrong block root.

- Swap the VC block service to use `mpsc` from `tokio` instead of `futures`. This is consistent with the rest of the code base.

~~This PR *reduces* the size of the codebase 🎉~~ It *used* to reduce the size of the code base before I added more comments.

## Observations on Prymont

- Proposer duties times down from peaks of 450ms to consistent <1ms.

- Current epoch attester duties times down from >1s peaks to a consistent 20-30ms.

- Block production down from +600ms to 100-200ms.

## Additional Info

- ~~Blocked on #2241~~

- ~~Blocked on #2234~~

## TODO

- [x] ~~Refactor this into some smaller PRs?~~ Leaving this as-is for now.

- [x] Address `per_slot_processing` roots.

- [x] Investigate slow next epoch times. Not getting added to cache on block processing?

- [x] Consider [this](072695284f/beacon_node/store/src/hot_cold_store.rs (L811-L812)) in the scenario of replacing the state roots

Co-authored-by: pawan <pawandhananjay@gmail.com>

Co-authored-by: Michael Sproul <michael@sigmaprime.io>

## Issue Addressed

Resolves#1944

## Proposed Changes

Adds a "graffiti" key to the `validator_definitions.yml`. Setting the key will override anything passed through the validator `--graffiti` flag.

Returns an error if the value for the graffiti key is > 32 bytes instead of silently truncating.

## Issue Addressed

- Resolves#1883

## Proposed Changes

This follows on from @blacktemplar's work in #2018.

- Allows the VC to connect to multiple BN for redundancy.

- Update the simulator so some nodes always need to rely on their fallback.

- Adds some extra deprecation warnings for `--eth1-endpoint`

- Pass `SignatureBytes` as a reference instead of by value.

## Additional Info

NA

Co-authored-by: blacktemplar <blacktemplar@a1.net>