Mirror of https://github.com/sigp/lighthouse.git (synced 2026-03-15 10:52:43 +00:00)

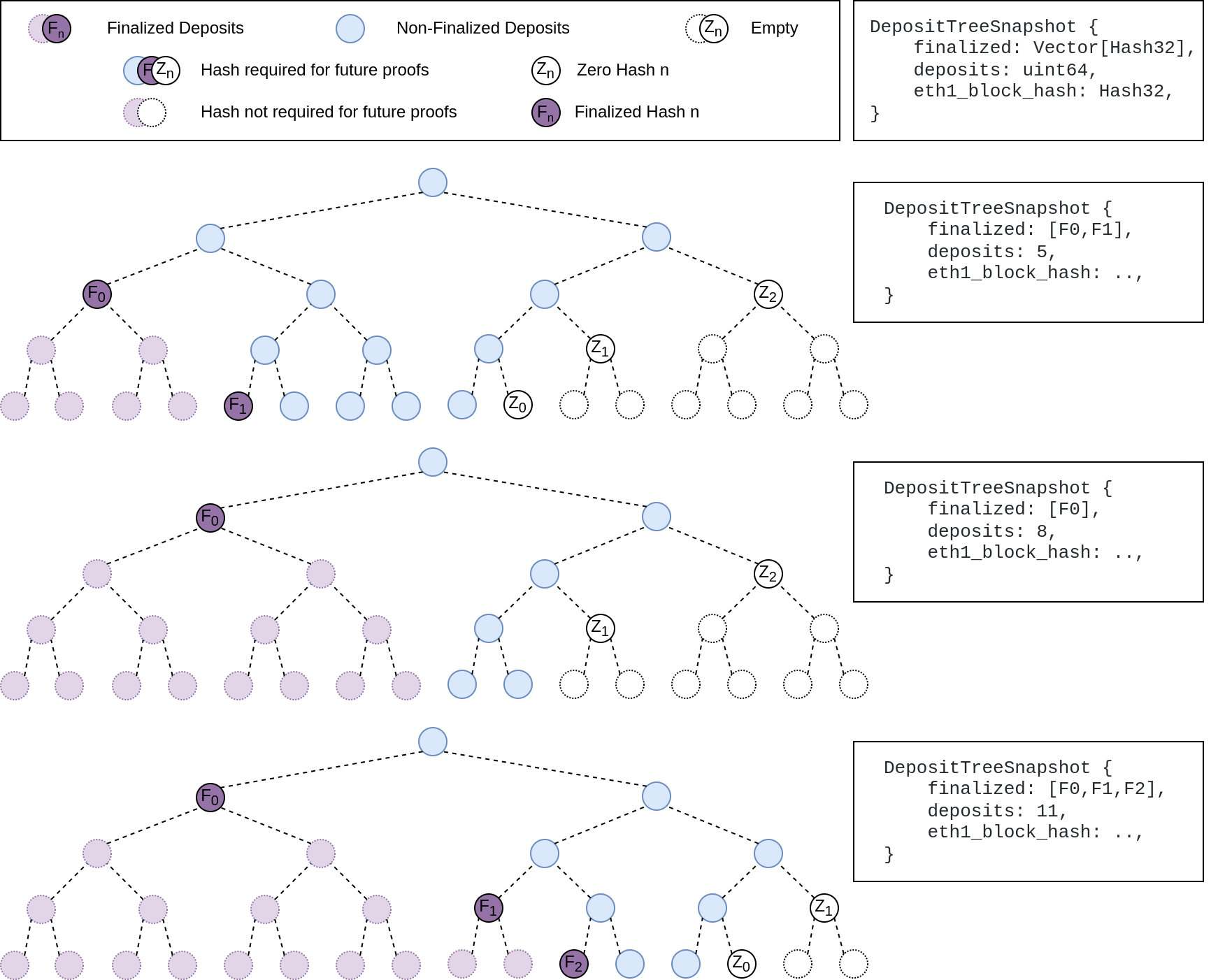

## Summary

The deposit cache now has the ability to finalize deposits. This will cause it to drop unneeded deposit logs and hashes in the deposit Merkle tree that are no longer required to construct deposit proofs. The cache is finalized whenever the latest finalized checkpoint has a new `Eth1Data` with all deposits imported.

This has three benefits:

1. Improves the speed of constructing Merkle proofs for deposits, as we can just replay deposits since the last finalized checkpoint instead of all historical deposits when reconstructing the Merkle tree.
2. Significantly faster weak subjectivity sync, as the deposit cache can be transferred to the newly syncing node in compressed form. The Merkle tree that stores `N` finalized deposits requires a maximum of `log2(N)` hashes. The newly syncing node then only needs to download deposits since the last finalized checkpoint to have a full tree.
3. Future-proofing in preparation for [EIP-4444](https://eips.ethereum.org/EIPS/eip-4444), as execution nodes will no longer be required to store logs permanently, so we won't always have all historical logs available to us.

## More Details

Image to illustrate how the deposit contract Merkle tree evolves and finalizes along with the resulting `DepositTreeSnapshot`.

## Other Considerations

I've changed the structure of the `SszDepositCache`, so once you load & save your database from this version of Lighthouse, you will no longer be able to load it from older versions.

Co-authored-by: ethDreamer <37123614+ethDreamer@users.noreply.github.com>
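To make the compression claim in benefit 2 concrete, here is a minimal sketch of how few hashes a finalized tree needs. It assumes the snapshot keeps one root per maximal complete subtree of finalized leaves, so the count equals the number of set bits in `N` and is bounded by the bit length of `N` (on the order of `log2(N)`). The function names are hypothetical and are not taken from the Lighthouse code.

```rust
/// Number of hashes needed to summarize `n` finalized deposits.
/// Assumption: one root is stored per maximal complete subtree,
/// which corresponds to one hash per set bit of `n`.
fn finalized_hash_count(n: u64) -> u32 {
    n.count_ones()
}

/// Leaf counts of those complete subtrees, largest first.
/// Each size is a power of two and together they sum to `n`.
fn finalized_subtree_sizes(n: u64) -> Vec<u64> {
    (0..64u32)
        .rev()
        .map(|bit| 1u64 << bit)
        .filter(|size| n & size != 0)
        .collect()
}

fn main() {
    let n = 1_000_000u64;
    println!(
        "{} finalized deposits -> {} stored hashes",
        n,
        finalized_hash_count(n)
    );
    println!("subtree sizes: {:?}", finalized_subtree_sizes(n));
}
```

Under this assumption a syncing node receives a handful of hashes rather than every historical deposit log, and then replays only the deposits made after the finalized checkpoint.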

120 lines

3.4 KiB

Rust

use crate::{DBColumn, Error, StoreItem};
use serde_derive::{Deserialize, Serialize};
use ssz::{Decode, Encode};
use ssz_derive::{Decode, Encode};
use types::{Checkpoint, Hash256, Slot};

pub const CURRENT_SCHEMA_VERSION: SchemaVersion = SchemaVersion(13);

// All the keys that get stored under the `BeaconMeta` column.
//
// We use `repeat_byte` because it's a const fn.
pub const SCHEMA_VERSION_KEY: Hash256 = Hash256::repeat_byte(0);
pub const CONFIG_KEY: Hash256 = Hash256::repeat_byte(1);
pub const SPLIT_KEY: Hash256 = Hash256::repeat_byte(2);
pub const PRUNING_CHECKPOINT_KEY: Hash256 = Hash256::repeat_byte(3);
pub const COMPACTION_TIMESTAMP_KEY: Hash256 = Hash256::repeat_byte(4);
pub const ANCHOR_INFO_KEY: Hash256 = Hash256::repeat_byte(5);

#[derive(Debug, Clone, Copy, PartialEq, Eq, PartialOrd, Ord)]
pub struct SchemaVersion(pub u64);

impl SchemaVersion {
    pub fn as_u64(self) -> u64 {
        self.0
    }
}

impl StoreItem for SchemaVersion {
    fn db_column() -> DBColumn {
        DBColumn::BeaconMeta
    }

    fn as_store_bytes(&self) -> Vec<u8> {
        self.0.as_ssz_bytes()
    }

    fn from_store_bytes(bytes: &[u8]) -> Result<Self, Error> {
        Ok(SchemaVersion(u64::from_ssz_bytes(bytes)?))
    }
}

/// The checkpoint used for pruning the database.
///
/// Updated whenever pruning is successful.
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub struct PruningCheckpoint {
    pub checkpoint: Checkpoint,
}

impl StoreItem for PruningCheckpoint {
    fn db_column() -> DBColumn {
        DBColumn::BeaconMeta
    }

    fn as_store_bytes(&self) -> Vec<u8> {
        self.checkpoint.as_ssz_bytes()
    }

    fn from_store_bytes(bytes: &[u8]) -> Result<Self, Error> {
        Ok(PruningCheckpoint {
            checkpoint: Checkpoint::from_ssz_bytes(bytes)?,
        })
    }
}

/// The last time the database was compacted.
pub struct CompactionTimestamp(pub u64);

impl StoreItem for CompactionTimestamp {
    fn db_column() -> DBColumn {
        DBColumn::BeaconMeta
    }

    fn as_store_bytes(&self) -> Vec<u8> {
        self.0.as_ssz_bytes()
    }

    fn from_store_bytes(bytes: &[u8]) -> Result<Self, Error> {
        Ok(CompactionTimestamp(u64::from_ssz_bytes(bytes)?))
    }
}

/// Database parameters relevant to weak subjectivity sync.
#[derive(Debug, PartialEq, Eq, Clone, Encode, Decode, Serialize, Deserialize)]
pub struct AnchorInfo {
    /// The slot at which the anchor state is present and which we cannot revert.
    pub anchor_slot: Slot,
    /// The slot from which historical blocks are available (>=).
    pub oldest_block_slot: Slot,
    /// The block root of the next block that needs to be added to fill in the history.
    ///
    /// Zero if we know all blocks back to genesis.
    pub oldest_block_parent: Hash256,
    /// The slot from which historical states are available (>=).
    pub state_upper_limit: Slot,
    /// The slot before which historical states are available (<=).
    pub state_lower_limit: Slot,
}

impl AnchorInfo {
    /// Returns true if the block backfill has completed.
    pub fn block_backfill_complete(&self) -> bool {
        self.oldest_block_slot == 0
    }
}

impl StoreItem for AnchorInfo {
    fn db_column() -> DBColumn {
        DBColumn::BeaconMeta
    }

    fn as_store_bytes(&self) -> Vec<u8> {
        self.as_ssz_bytes()
    }

    fn from_store_bytes(bytes: &[u8]) -> Result<Self, Error> {
        Ok(Self::from_ssz_bytes(bytes)?)
    }
}
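The metadata items above all follow the same pattern: a fixed 32-byte key plus a byte-level round trip through `as_store_bytes` and `from_store_bytes`. The following standalone sketch illustrates that pattern using only the standard library. The `Item` trait, the `repeat_byte` helper and the `String` error type are hypothetical stand-ins for `StoreItem`, `Hash256::repeat_byte` and the crate's `Error`. It relies on SSZ encoding a `u64` as 8 little-endian bytes.

```rust
/// Hypothetical stand-in for the `StoreItem` trait (not the real one).
trait Item: Sized {
    fn as_store_bytes(&self) -> Vec<u8>;
    fn from_store_bytes(bytes: &[u8]) -> Result<Self, String>;
}

#[derive(Debug, Clone, Copy, PartialEq, Eq, PartialOrd, Ord)]
struct SchemaVersion(u64);

impl Item for SchemaVersion {
    fn as_store_bytes(&self) -> Vec<u8> {
        // SSZ serializes a u64 as 8 little-endian bytes.
        self.0.to_le_bytes().to_vec()
    }

    fn from_store_bytes(bytes: &[u8]) -> Result<Self, String> {
        // Reject anything that is not exactly 8 bytes long.
        if bytes.len() != 8 {
            return Err(format!("expected 8 bytes, got {}", bytes.len()));
        }
        let mut arr = [0u8; 8];
        arr.copy_from_slice(bytes);
        Ok(SchemaVersion(u64::from_le_bytes(arr)))
    }
}

/// Stand-in for `Hash256::repeat_byte(n)`: a 32-byte key with every byte set to `n`.
const fn repeat_byte(n: u8) -> [u8; 32] {
    [n; 32]
}

fn main() {
    let key = repeat_byte(0); // plays the role of SCHEMA_VERSION_KEY
    let stored = SchemaVersion(13).as_store_bytes();
    let loaded = SchemaVersion::from_store_bytes(&stored).expect("8 valid bytes");
    println!("key = {:?}", key);
    println!("stored = {:?}, loaded = {:?}", stored, loaded);
}
```

Because `SchemaVersion` derives `Ord`, a loaded version can be compared directly against `CURRENT_SCHEMA_VERSION`, for example to decide whether a migration is needed.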